Apple differential privacy


Differential Privacy Overview

The Apple differential privacy implementation incorporates the concept of a per-donation privacy budget (quantified by the parameter epsilon) and sets a strict limit on the number of contributions from a user in order to preserve their privacy.

Learning with Privacy at Scale

Abstract. Understanding how people use their devices often helps in improving the user experience. However, accessing the data that provides such insights (for example, what users type on their keyboards and the websites they visit) can compromise user privacy. We design a system architecture that enables learning at scale by leveraging local diff

Privacy Loss in Apple's Implementation of Differential

In June 2016, Apple made a bold announcement that it will deploy local differential privacy for some of their user data collection in order to ensure privacy of user data, even from Apple [21, 23]. The details of Apple's approach remained sparse.

What are local differentially private algorithms?

We now describe three local differentially private algorithms in the following sections. The Private Count Mean Sketch algorithm (CMS) aggregates records submitted by devices and outputs a histogram of counts over a dictionary of domain elements, while preserving local differential privacy.

What is differential privacy?

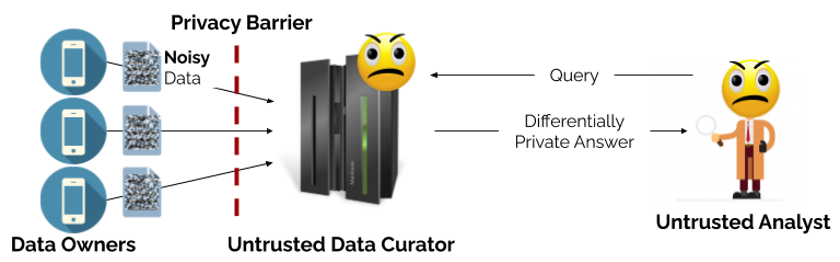

In general, differential privacy is defined for algorithms with input databases of size larger than 1. In the local model of differential privacy, algorithms may only access the data via the output of a local randomizer so that no raw data is stored on a server. Formally: Definition 3.2 (Local Differential Privacy). Let $A : D^n \to$

Can information design solve the problem of differentially private data publication?

Our analysis introduces the tools of information design to the problem of differentially private data publication. Several applications suggest generalizations of our setting. First, in many settings, data users interact with one another, instead of making independent decisions.

Does a system architecture combine differential privacy and privacy best practices?

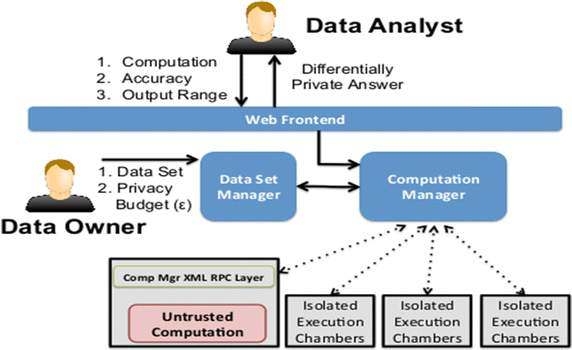

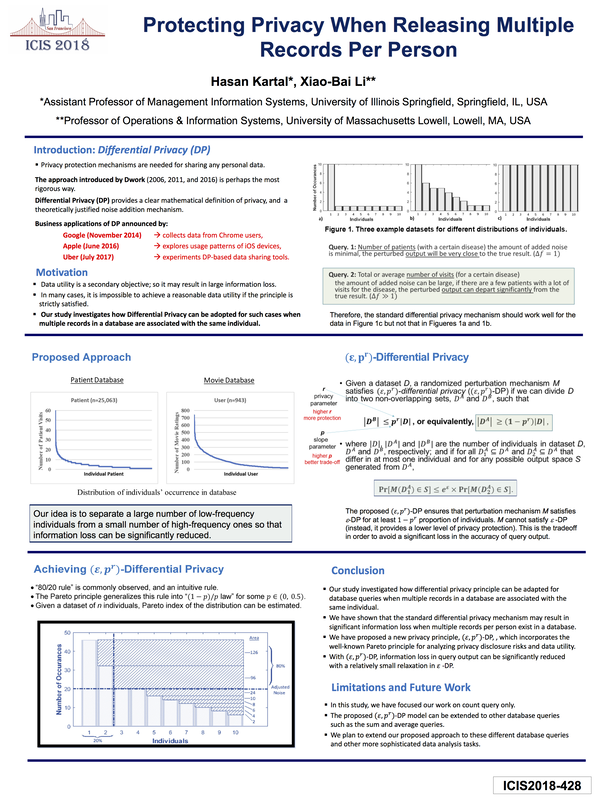

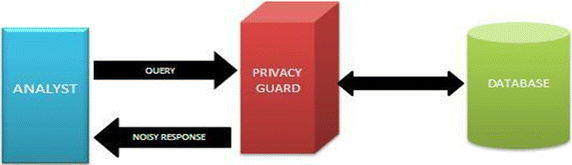

In this paper, we describe a system architecture that combines differential privacy and privacy best practices to learn from a user population addressing both privacy and practical deployment concerns. Differential privacy provides a mathematically rigorous definition of privacy and is one of the strongest guarantees of privacy available.

Differential Privacy Team, Apple

Abstract Understanding how people use their devices often helps in improving the user experience. However, accessing the data that provides such insights — for example, what users type on their keyboards and the websites they visit — can compromise user privacy. We design a system architecture that enables learning at scale by leveraging local diff

1 Introduction

Gaining insight into the overall user population is crucial to improving the user experience. For example, what new words are trending? Which health categories are most popular with users? Which websites cause excessive energy drain? The data needed to derive such insights is personal and sensitive, and must be kept private. In addition to privacy

3.1 Differential Privacy

We first define a local randomizer which we will use in the definition of local differential privacy.

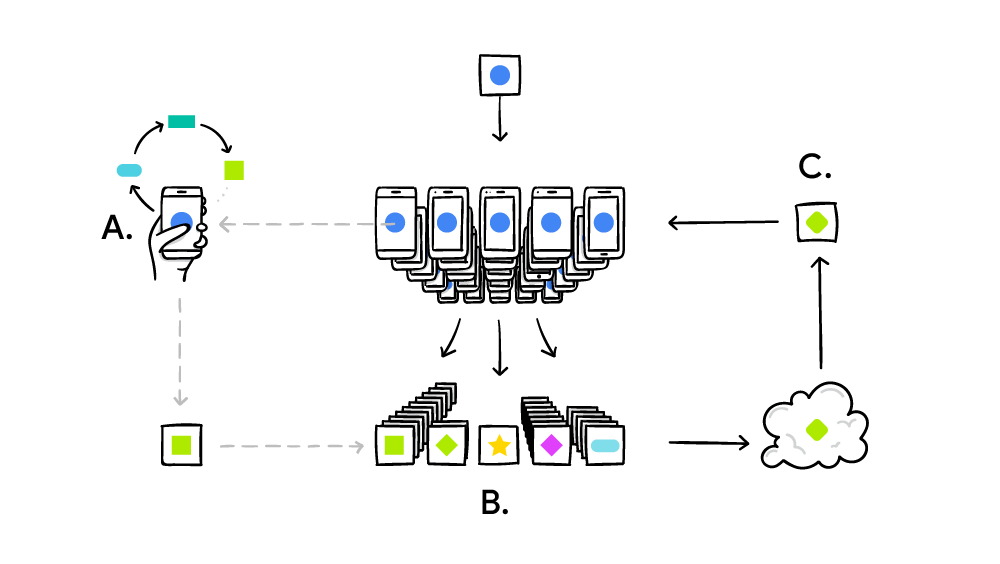
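To make the idea of a local randomizer concrete, here is a minimal sketch using binary randomized response. This is a textbook mechanism chosen for illustration, not one of the algorithms described in the paper; the function names are hypothetical. It reports the true bit with probability e^ε / (e^ε + 1), so the probability of any output changes by at most a factor of e^ε when the input changes.

```python
import math
import random


def truth_probability(epsilon):
    """Probability of reporting the true bit under randomized response."""
    return math.exp(epsilon) / (math.exp(epsilon) + 1)


def randomized_response(bit, epsilon, rng=random):
    """Local randomizer: keep the bit with probability p, otherwise flip it."""
    return bit if rng.random() < truth_probability(epsilon) else 1 - bit


def output_probability(output, bit, epsilon):
    """Pr[randomized_response(bit) == output]."""
    p = truth_probability(epsilon)
    return p if output == bit else 1 - p


def worst_case_ratio(epsilon):
    """Largest ratio Pr[A(d) = y] / Pr[A(d') = y] over inputs d, d' and outputs y."""
    return max(
        output_probability(y, d, epsilon) / output_probability(y, d_prime, epsilon)
        for y in (0, 1)
        for d in (0, 1)
        for d_prime in (0, 1)
    )
```

The worst-case ratio equals e^ε exactly, which is the bound that an ε-local randomizer has to meet.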

3.2 System Architecture

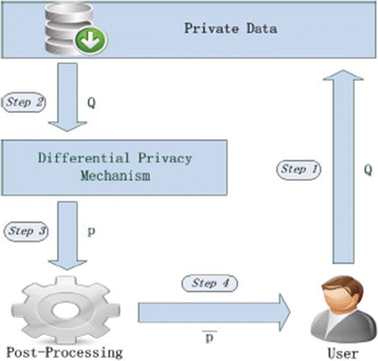

Our system architecture consists of device- and server-side data processing. On the device, the privatization stage ensures raw data is made differentially private. The restricted-access server does data processing that can be further divided into the ingestion and aggregation stages. We explain each stage in detail below.

3.2.2 Ingestion and Aggregation

The privatized records are first stripped of their IP addresses prior to entering the ingestor. The ingestor then collects the data from all users and processes them in a batch. The batch process removes metadata, such as the timestamps of privatized records received, and separates these records based on their use case. The ingestor also randomly p
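The metadata removal and per-use-case separation described above can be sketched as follows. The record layout (`key`, `payload`, `timestamp`) and the function name `ingest` are assumptions made for illustration; the source does not specify the format, and the sketch covers only the two steps that are fully stated in the text.

```python
from collections import defaultdict


def ingest(batch):
    """Group privatized payloads by use case, dropping all other metadata.

    Each record is assumed (hypothetically) to be a dict with a "key" naming
    the use case, a privatized "payload", and metadata such as "timestamp".
    Only the payload is retained.
    """
    by_use_case = defaultdict(list)
    for record in batch:
        by_use_case[record["key"]].append(record["payload"])
    return dict(by_use_case)
```

Keeping only the payload, rather than deleting known metadata fields one by one, means any unexpected extra field is dropped as well.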

4 Algorithms

Of relevance to our algorithms is the count sketch algorithm [4] which finds frequently reported data elements along with accurate counts from a stream. We also use a sketch matrix data structure to compute counts for a collection of privatized domain elements. However, to ensure differential privacy, our algorithms deviate significantly. We next

4.1 Private Count Mean Sketch

We develop a local differentially private algorithm that outputs a histogram of counts over a domain D for a dataset consisting of n records. At a high level, Count Mean Sketch (CMS) is composed of a client-side algorithm and a server-side algorithm. The client-side algorithm ensures that the data that leaves the user’s device is ε-differentially pri

$$\ln\left(\frac{\Pr[\mathcal{A}_{\text{client}}(d; \epsilon, \mathcal{H}) = (\tilde{v}, j)]}{\Pr[\mathcal{A}_{\text{client}}(d'; \epsilon, \mathcal{H}) = (\tilde{v}, j)]}\right) \le \epsilon.$$

Because CMS is a post-processing function of the outputs from $\mathcal{A}_{\text{client-CMS}}$, we then have that CMS is ε-local differentially private.

Because HCMS is a post-processing function of the outputs from $\mathcal{A}_{\text{client-HCMS}}$, we then have that HCMS is ε-local differentially private.
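The client and server sides of CMS can be sketched roughly as below. This follows the general shape of the published description as best I can reconstruct it (a one-hot ±1 vector with each coordinate flipped with probability 1/(e^{ε/2} + 1), then a debiased k × m sketch matrix); the salted SHA-256 hash family, the function names, and the parameter values in any usage are assumptions for illustration, not Apple's implementation.

```python
import hashlib
import math
import random


def make_hashes(k, m):
    """Hypothetical hash family: k salted SHA-256 hashes into range(m)."""
    def make(salt):
        def h(d):
            digest = hashlib.sha256(f"{salt}:{d}".encode()).digest()
            return int.from_bytes(digest[:8], "big") % m
        return h
    return [make(j) for j in range(k)]


def cms_client(d, epsilon, hashes, m, rng):
    """Privatize one element: returns (v_tilde, j)."""
    j = rng.randrange(len(hashes))            # pick one hash function uniformly
    v = [-1] * m
    v[hashes[j](d)] = 1                       # one-hot encoding in {-1, +1}
    flip_p = 1.0 / (math.exp(epsilon / 2) + 1)
    v_tilde = [-x if rng.random() < flip_p else x for x in v]
    return v_tilde, j


def cms_server(reports, epsilon, k, m):
    """Accumulate debiased reports into a k x m sketch matrix."""
    c = (math.exp(epsilon / 2) + 1) / (math.exp(epsilon / 2) - 1)
    sketch = [[0.0] * m for _ in range(k)]
    for v_tilde, j in reports:
        row = sketch[j]
        for i, x in enumerate(v_tilde):
            row[i] += k * (c / 2 * x + 0.5)
    return sketch


def cms_estimate(sketch, d, hashes, n):
    """Estimate how many of the n records equal d."""
    k, m = len(sketch), len(sketch[0])
    mean = sum(sketch[l][hashes[l](d)] for l in range(k)) / k
    return (m / (m - 1)) * (mean - n / m)
```

Two inputs produce one-hot vectors that differ in at most two coordinates, and each coordinate's flip contributes a factor of at most e^{ε/2} to the probability ratio, which is where the overall ε bound comes from. The server only ever sees `(v_tilde, j)` pairs, so the estimate is post-processing of privatized outputs.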

6 Results

We deploy our algorithms across hundreds of millions of devices. We present results for the following use cases: New Words, Popular Emojis, Video Playback Preferences (Auto-play Intent) in Safari, High Energy and Memory Usage in Safari, and Popular HealthKit Usage.

7 Conclusion

In this paper, we have presented a novel learning system architecture, which leverages local differential privacy and combines it with privacy best practices. To scale our system across millions of users and a variety of use cases, we have developed local differentially private algorithms – CMS, HCMS, and SFP – for both the known and unknown dictio

$\ldots_j = \ldots \cdot \mathbb{1}\{d^{(i)} \neq d\} + \ldots \cdot \mathbb{1}\{d^{(i)} = d\}$. We first prove the case when $d^{(i)} = d$. We will use the property of the Hadamard matrix that its columns are orthogonal.
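The orthogonality property used in this proof can be checked numerically. The sketch below builds a Hadamard matrix with the Sylvester construction, which is an assumption here since the excerpt does not say which construction is used, and computes dot products between columns; the function names are hypothetical.

```python
def hadamard(m):
    """Sylvester construction of an m x m Hadamard matrix (m a power of two)."""
    if m < 1 or m & (m - 1):
        raise ValueError("m must be a power of two")
    H = [[1]]
    while len(H) < m:
        # Block form [[H, H], [H, -H]] doubles the size at each step.
        H = [row + row for row in H] + [row + [-x for x in row] for row in H]
    return H


def column_dot(H, a, b):
    """Dot product of columns a and b of H."""
    return sum(row[a] * row[b] for row in H)
```

For an m × m Hadamard matrix, `column_dot(H, a, b)` is m when a equals b and 0 otherwise, so distinct columns are orthogonal.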


Differential Privacy Overview

The differential privacy technology used by Apple is rooted in the idea that statistical noise that is slightly biased can mask a user's individual data before

Learning with Privacy at Scale

Note that the privatized data is elided here for presentation; the full size in this example is 128 bytes. "key": "com.apple.keyboard.Emoji.en_US

DP Tech Brief FINAL 10-11-2017

10 Nov 2017. The differential privacy technology developed by Apple is rooted in the idea that statistical noise that is slightly biased can mask a user's ...

Safari Privacy Overview

Safari is the built-in browser on Mac, iPhone

Privacy Loss in Apple's Implementation of Differential Privacy on

10 Sep 2017. Abstract. In June 2016, Apple made a bold announcement that it will deploy local differential privacy for some of their user data collection ...

Privacy at Scale: Local Differential Privacy in Practice

technology organizations including Google

The Limits of Differential Privacy (and its Misuse in Data Release

4 Nov 2020. Google, Apple and Facebook have seen the chance to collect or release microdata (individual respondent data) from their users under the privacy ...

Local Differential Privacy for Sampling

DP by Apple (Differential Privacy Team, Apple

Local Differential Privacy and trade-off with Utility

Privacy level n statistical analyses. Individual sanitized data. Local Differential Privacy. 9. Apple. QoS. Statistical Utility

Frequency Estimation under Local Differential Privacy

deployed by large technology companies such as Apple

Differential Privacy Overview - Apple

It is a technique that enables Apple to learn about the user community without learning about individuals in the community. Differential privacy transforms the

Learning with Privacy at Scale - Apple

Data is privatized on the user's device using event-level differential privacy [7] in the local model where an event might be, for example, a user typing an emoji

Local Differential Privacy for Evolving Data - NIPS Proceedings

In this paper, we introduce a new technique for local differential privacy that ... the privacy parameters guaranteed by Apple's implementation of differentially

Local Differential Privacy in Practice - DIMACS

Local differential privacy (LDP), where users randomly perturb their inputs to technology organizations, including Google, Apple and Microsoft. This tutorial

Orchard: Differentially Private Analytics at Scale - USENIX

6 Nov 2020 · differential privacy to monitor the Chrome web browser [31], and Apple is using it in iOS and macOS, e.g., to train its models for predictive typing

Part 5: Differentially Private Data Mining and - Yin David Yang

Differential Privacy in Data Publication and Analysis Part 5: Alice apple banana cherry melon Bob (apple) and (melon) are two frequent patterns,

The Role of Differential Privacy in GDPR Compliance - GitLab

For example, recent work advocated that Apple's choice of parameters in their implementation of differential privacy provided insufficient privacy to users [11]. The

Selecting a Differential Privacy Level for Accuracy - CORE

Traditional approaches to differential privacy assume a fixed privacy ... recent set of large-scale practical deployments, including by Google [10] and Apple [11]
