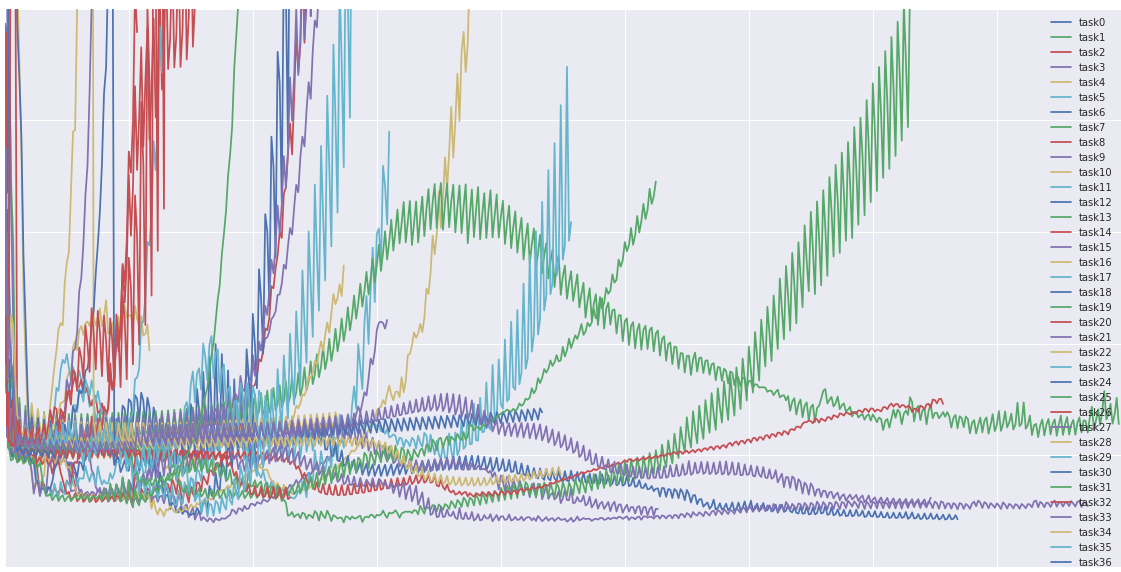

Increase the batch size

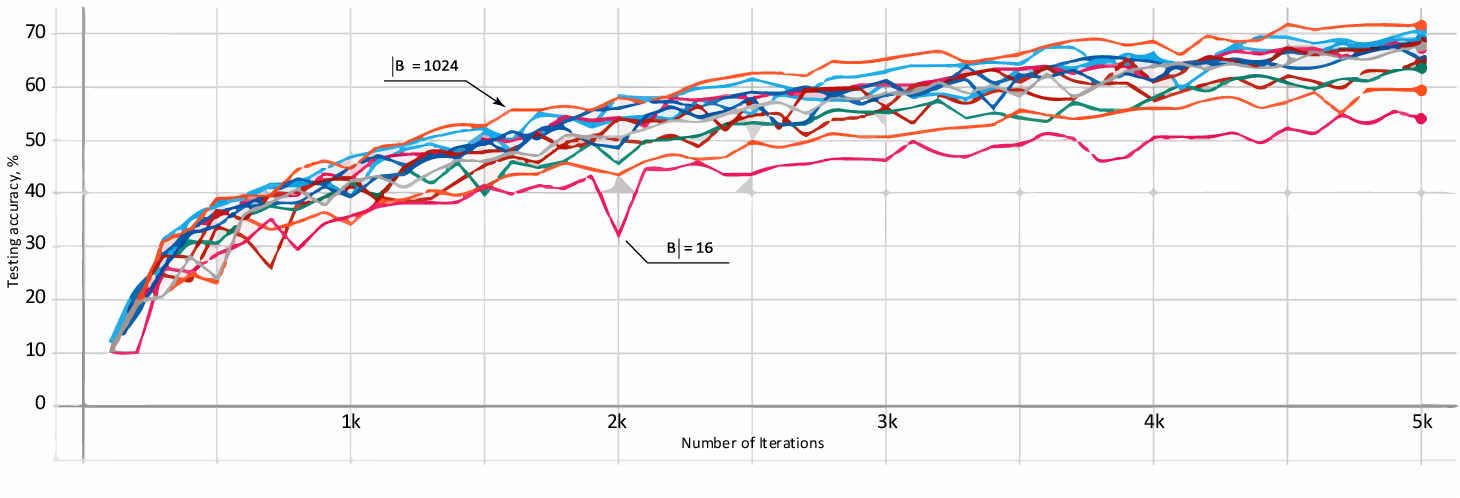

Why is a small batch better than a large batch?

To conclude, a smaller mini-batch size (though not too small) usually leads not only to fewer iterations of the training algorithm than a large batch size, but also to higher overall accuracy, i.e., a neural network that performs better in the same amount of training time or less.

Why do we increase batch size during training?

Instead of decaying the learning rate, we increase the batch size during training. This strategy achieves near-identical model performance on the test set with the same number of training epochs but significantly fewer parameter updates.
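A minimal sketch of such a schedule, assuming a step-style rule: wherever a learning-rate schedule would divide the learning rate by some factor, the batch size is multiplied by that factor instead. The function names, the milestones, the factor of 5, and the dataset size of 50,000 are illustrative assumptions, not values taken from the text above.

```python
def batch_size_schedule(initial_batch_size, factor, milestones, num_epochs,
                        max_batch_size=None):
    """Return the batch size to use in each epoch.

    At every epoch listed in `milestones` the batch size is multiplied by
    `factor` (the point where a step schedule would decay the learning rate).
    """
    sizes = []
    batch_size = initial_batch_size
    for epoch in range(num_epochs):
        if epoch in milestones:
            batch_size *= factor
            if max_batch_size is not None:
                # Hypothetical cap, e.g. imposed by device memory.
                batch_size = min(batch_size, max_batch_size)
        sizes.append(batch_size)
    return sizes


def count_updates(dataset_size, batch_sizes):
    """Total parameter updates: one per (possibly partial) batch per epoch."""
    return sum(-(-dataset_size // b) for b in batch_sizes)  # ceiling division


schedule = batch_size_schedule(128, factor=5, milestones={3, 6}, num_epochs=9)
constant = [128] * 9

updates_increasing = count_updates(50_000, schedule)
updates_constant = count_updates(50_000, constant)
print(schedule)
print(updates_increasing, updates_constant)
```

Both runs cover the same nine epochs, but the increasing schedule performs far fewer parameter updates, which is the saving the passage describes.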

How to increase batch size?

The table shows that manual adjustment gives only a small increase in batch size, whereas Optimal Gradient Checkpointing (n exp = 9) more than doubles it with an insignificant slowdown in training. Gradient accumulation is one of the simplest techniques to increase the effective batch size.
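Gradient accumulation can be illustrated with a small dependency-free sketch. It assumes a one-parameter linear model with a mean squared error loss and equally sized micro-batches; all names and numbers are hypothetical. The point is only that averaging the gradients of several micro-batches reproduces the gradient of the one large batch that would not fit in memory at once.

```python
def mse_gradient(w, xs, ys):
    """Gradient of 0.5 * mean((w * x - y) ** 2) with respect to w."""
    return sum((w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_gradient(w, xs, ys, micro_batch_size):
    """Accumulate micro-batch gradients into one large-batch gradient."""
    if len(xs) % micro_batch_size != 0:
        raise ValueError("this sketch assumes equally sized micro-batches")
    steps = len(xs) // micro_batch_size
    grad = 0.0
    for i in range(steps):
        lo = i * micro_batch_size
        hi = lo + micro_batch_size
        # Scale each micro-batch gradient so the sum equals the full mean.
        grad += mse_gradient(w, xs[lo:hi], ys[lo:hi]) / steps
    return grad


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]  # data generated with a true weight of 2.0
w = 0.5

full = mse_gradient(w, xs, ys)                               # batch of 8
accum = accumulated_gradient(w, xs, ys, micro_batch_size=2)  # 4 batches of 2

# A single optimizer step is taken only after all micro-batches are processed.
w_new = w - 0.01 * accum
print(full, accum, w_new)
```

In a deep learning framework the same idea is usually expressed by calling the backward pass on each micro-batch and stepping the optimizer once every few micro-batches.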

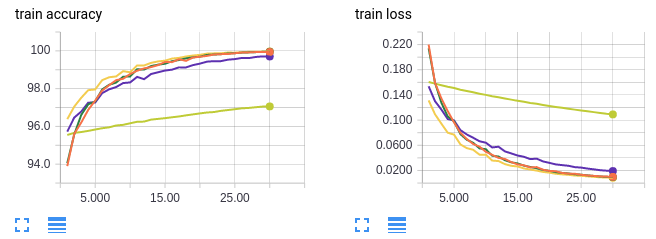

Can a batch size increase a learning curve?

From "Don't Decay the Learning Rate, Increase the Batch Size": It is common practice to decay the learning rate. Here we show one can usually obtain the same learning curve on both training and test sets by instead increasing the batch size during training.
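The reasoning usually given for this equivalence is phrased in terms of the SGD noise scale. As a hedged sketch, with learning rate $\epsilon$, training set size $N$, and batch size $B$ (the symbols are chosen here for illustration):

```latex
g = \epsilon \left( \frac{N}{B} - 1 \right) \approx \frac{\epsilon N}{B}
\quad \text{for } B \ll N,
\qquad
\frac{(\epsilon / k)\, N}{B} = \frac{\epsilon N}{k B}
```

Under this approximation, dividing the learning rate by a factor $k$ and multiplying the batch size by $k$ reduce the noise scale by the same amount, which is why the two schedules can trace out similar learning curves. The approximation breaks down once $B$ is no longer small compared with $N$.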


Don't Decay the Learning Rate, Increase the Batch Size

Large batches can be parallelized across many machines, reducing training time. Unfortunately

On the Computational Inefficiency of Large Batch Sizes for

30 Nov. 2018: Increasing the mini-batch size for stochastic gradient descent offers significant opportunities to reduce wall-clock training.

Optimization of Batch Sizes

Smaller batch sizes in combination with equally sized batches were, however, shown to decrease the variation in the production plan and to result in increased

An Empirical Model of Large-Batch Training

14 Dec. 2018: In an increasing number of domains it has been demonstrated that deep learning models can be trained using relatively large batch sizes ...

Increasing the Batch Size of a QESD Crystallization by Using a

9 June 2022: A disadvantage of QESD crystallizations is that the particle size of the agglomerates decreases with an increased solvent fraction of the mother ...

Revisiting Small Batch Training for Deep Neural Networks

20 Apr. 2018: value of the weight update per gradient calculation constant while increasing the batch size implies a linear increase of the variance of ...

Improving Scalability of Parallel CNN Training by Adjusting Mini

Such small batch sizes make it challenging to scale up the training. III. CNN Training with Adaptive Batch Size and Learning Rate. In this section we discuss

Augment your batch: better training with larger batches

27 Jan. 2019: In this work we propose Batch Augmentation (BA)

Guideline on stability testing for applications for variations to a

21 Mar. 2014: (B.II.b.4.d) Change in the batch size (including batch size ... product: introduction or increase in the overage that is used for the active.

Large Batch Optimization for Object Detection: Training COCO in 12

Nevertheless, it is not trivial to directly increase the batch size, as large batch size training always suffers from performance degradation [8].

Control Batch Size and Learning Rate to Generalize Well - NeurIPS

training strategy that we should control the ratio of batch size to learning rate not operations, which would not change the geometry of objective function and

Which Algorithmic Choices Matter at Which Batch Sizes? - NIPS

Increasing the batch size is one of the most appealing ways to accelerate neural network training on data parallel hardware. Larger batch sizes yield better

Why Does Large Batch Training Result in Poor - HUSCAP

that gradually increases batch size during training. We also explain why large batch training degrades generalization performance, which previous studies

Dynamically Adjusting Transformer Batch Size by Monitoring

In this paper, we analyze how increasing batch size affects gradient direction, and propose to evaluate the stability of gradients with their angle change

On the Generalization Benefit of Noise in Stochastic Gradient Descent

constant and increases the batch size, even if one continues training until the loss ceases to fall. A number of recent papers have studied this effect (Smith & Le,

CROSSBOW: Scaling Deep Learning with Small Batch Sizes on

GPUs, systems must increase the batch size, which hinders statistical efficiency. Users tune hyper-parameters such as the learning rate to compensate for this

![Impact of Training Set Batch Size on the Performance of](https://ars.els-cdn.com/content/image/1-s2.0-S2405959519303455-gr1.jpg)