l1 linear regression

|

L1-regularized linear regression: Persistence and oracle inequalities

Feb 4 2011 l1-regularized linear regression: Persistence and oracle inequalities. Peter L. Bartlett |

|

A framework to efficiently smooth L1 penalties for linear regression

Sep 19 2020 Keywords: Elastic net; Fista; Fused Lasso |

|

Differentially Private l1-norm Linear Regression with Heavy-tailed

Jan 10 2022 Specifically |

|

CSC 411 Lecture 6: Linear Regression

CSC 411: 06-Linear Regression The L1 norm or sum of absolute values |

|

Fast Active-set-type Algorithms for L1-regularized Linear Regression

L1-regularized linear regression, also known as the Lasso (Tibshirani |

|

L1pack: Routines for L1 Estimation

Description L1 estimation for linear regression density |

|

A framework to efficiently smooth L1 penalties for linear regression

Dec 27 2020 Keywords: Elastic net; Fista; Fused Lasso |

|

Ising Model Selection Using l1-Regularized Linear Regression: A

In this paper we focus on one simpler linear estimator called l1-regularized linear regression (l1-LinR) and theoretically investigate its typical |

|

L1 penalized LAD estimator for high dimensional linear regression

In this paper the high-dimensional sparse linear regression model is other methods |

|

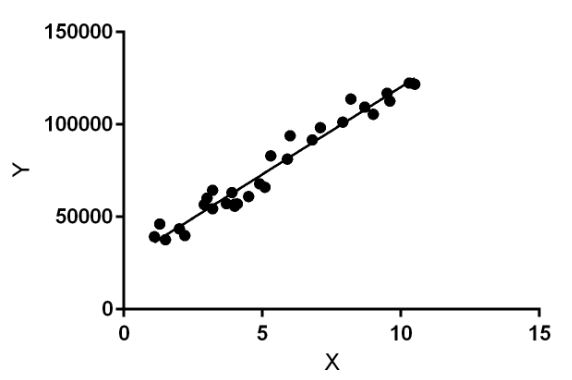

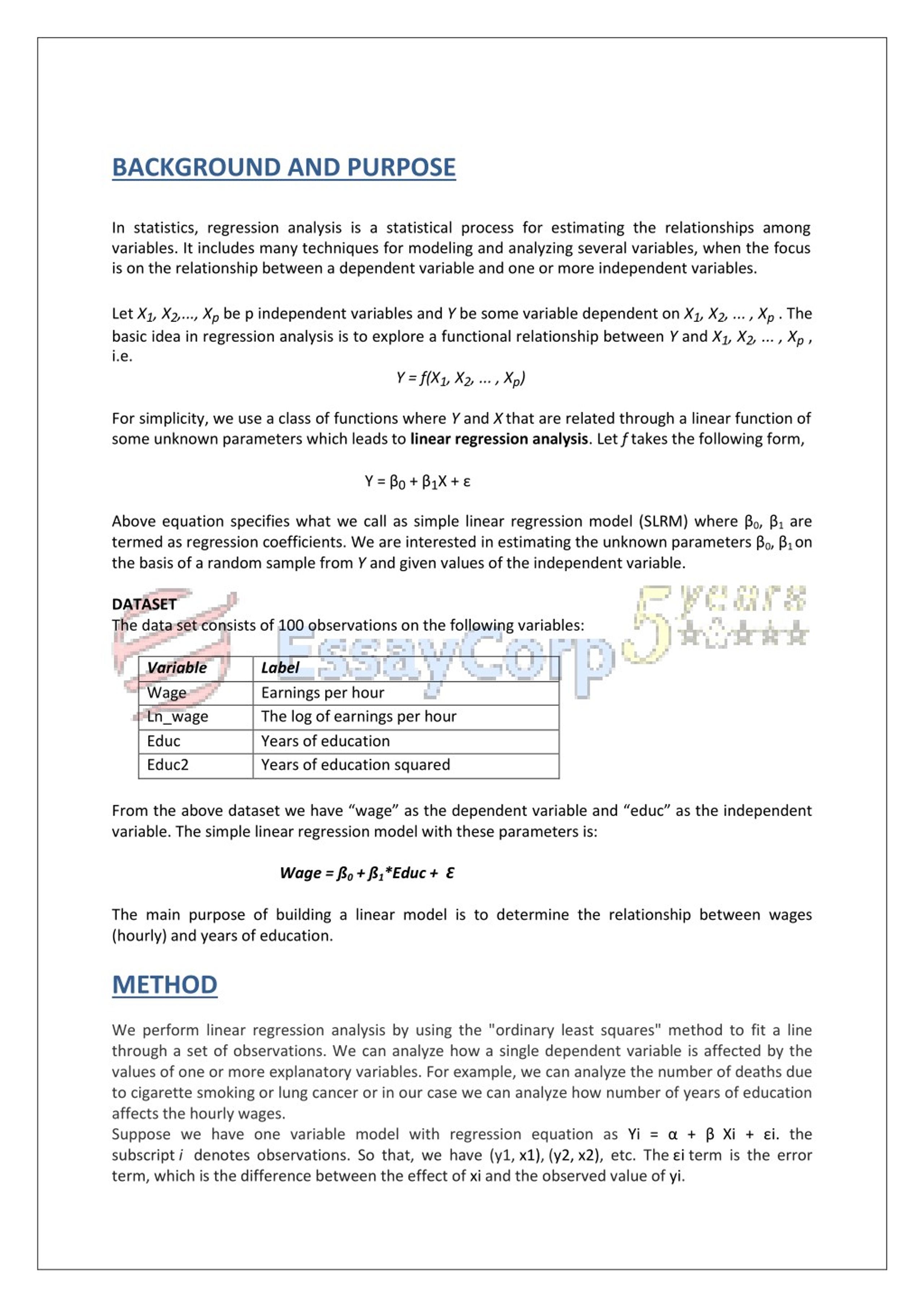

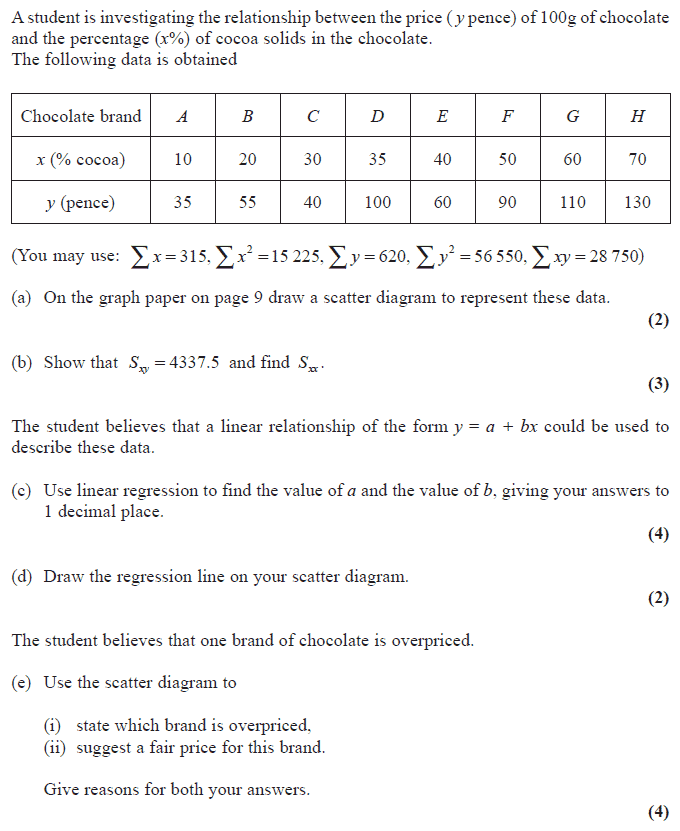

Simple Linear Regression

Linear Regression Model The simplest deterministic mathematical relationship between two variables x and y is a linear relationship: $y = \beta_0$ |

|

Chapter 2 Simple Linear Regression Analysis - IIT Kanpur

The simple linear regression model We consider the modelling between the dependent and one independent variable When there is only one |

|

Chapter 9 Simple Linear Regression - Statistics & Data Science

Simple Linear Regression An analysis appropriate for a quantitative outcome and a single quantitative explanatory variable 9.1 The model behind linear |

|

Linear Regression (1/1/17) - csPrinceton

1 jan 2017 · Our goal in linear regression is to estimate the coefficients including a slope and an intercept describing the relationship between X and Y : |

|

Week 5: Simple Linear Regression

One useful derivation is to write the OLS estimator for the slope as a weighted sum of the outcomes: $\hat{\beta}_1 = \sum_{i=1}^{n} W_i Y_i$, where |

|

Chapter 4: Linear Regression

The variable Y is called the dependent variable or the variable to be explained, and the variables Xj (j = 1, ..., q) are called independent variables or variables |

|

Simple Linear Regression - Kosuke Imai

Kosuke Imai, Princeton University. POL572 Quantitative Analysis II, Spring 2016 |

|

1 Simple Linear Regression I – Least Squares Estimation

The main reasons that scientists and social researchers use linear regression are the following: 1. Prediction – To predict a future response based on known |

|

Lecture 9: Linear Regression

Why Linear Regression? • Suppose we want to model the dependent variable $Y$ in terms of three predictors $X_1$, $X_2$, $X_3$: $Y = f(X_1, X$ |

|

Linear regression

$\sum_{i=1}^{n} (y_i - \bar{y})^2$ is the total sum of squares • It can be shown that in this simple linear regression setting $R^2 = r^2$, where $r$ is the correlation between
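The identity between the coefficient of determination and the squared correlation can be checked numerically. The following is a minimal sketch in plain Python; the function names and the toy data are illustrative assumptions, not taken from any of the listed documents.

```python
import math

def fit_line(x, y):
    """Return (intercept, slope) of the least-squares line through (x, y)."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

def r_squared(x, y):
    """R^2 = 1 - (residual sum of squares) / (total sum of squares)."""
    a, b = fit_line(x, y)
    y_bar = sum(y) / len(y)
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    ss_res = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
    return 1.0 - ss_res / ss_tot

def pearson_r(x, y):
    """Sample correlation coefficient between x and y."""
    n = len(x)
    x_bar, y_bar = sum(x) / n, sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical toy data, roughly linear with some noise.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1]

r2 = r_squared(x, y)
r = pearson_r(x, y)
print(r2, r ** 2)  # the two values should agree up to rounding error
```

The equality holds only for simple linear regression with an intercept; with several predictors, $R^2$ is instead the squared correlation between the observed and fitted values.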

What is linear regression analysis PDF?

Linear regression is a statistical procedure for calculating the value of a dependent variable from an independent variable. Linear regression measures the association between two variables. It is a modeling technique where a dependent variable is predicted based on one or more independent variables.

What is single linear regression?

Simple linear regression is a regression model that estimates the relationship between one independent variable and one dependent variable using a straight line. Both variables should be quantitative.

What is $\beta_1$?

$\beta_0$ is also called the intercept (the value of $E[Y]$ when $X = 0$); $\beta_1$ is called the slope, indicating the average change in $Y$ when $X$ increases by one unit.

The equation has the form $Y = a + bX$, where $Y$ is the dependent variable (the variable that goes on the Y axis), $X$ is the independent variable (plotted on the X axis), $b$ is the slope of the line and $a$ is the y-intercept.
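As a rough illustration of the intercept and slope described above, here is a minimal least-squares fit in plain Python. The function name `fit_simple_linear_regression` and the data are hypothetical; the closed-form estimates (slope = covariance of X and Y divided by variance of X, intercept = mean of Y minus slope times mean of X) are the standard ones.

```python
def fit_simple_linear_regression(x, y):
    """Return (a, b) minimizing the sum of squared errors of Y = a + b*X."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    b = sxy / sxx          # slope: average change in Y per unit increase in X
    a = y_bar - b * x_bar  # intercept: predicted Y at X = 0
    return a, b

# Hypothetical data lying exactly on the line Y = 1 + 2X.
x = [0.0, 1.0, 2.0, 3.0]
y = [1.0, 3.0, 5.0, 7.0]

a, b = fit_simple_linear_regression(x, y)
prediction = a + b * 4.0  # predicted Y for a new point X = 4
print(a, b, prediction)
```

Because the toy data are noise-free, the fitted line recovers the generating intercept and slope; with real data the estimates would only approximate them.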

|

Regularized regression - Ridge, Lasso, Elasticnet - Université

MULTIPLE LINEAR REGRESSION Regression – Example: vehicle fuel consumption Target variable Constraint on the L1 norm of the coefficients |

|

A Survey of L1 Regression - Computational Intelligence Group

L1-regularized methods for linear regression, generalized linear models, and time series analysis Although this review targets practice rather than theory, we do |

|

Least Squares Optimization with L1-Norm Regularization

This project surveys and examines optimization approaches proposed for parameter estimation in Least Squares linear regression models with an L1 penalty |

|

Regularized Linear Regression

28 oct 2019 · Ridge Regression: Lasso: Lasso (l1 penalty) results in sparse solutions – vector with more zero coordinates Good for high-dimensional problems |

|
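The sparsity effect of the l1 penalty mentioned in the entry above can be sketched with a small coordinate-descent solver. This is an illustrative sketch only, not the method of any listed document: it assumes the objective $\frac{1}{2n}\lVert y - Xw\rVert_2^2 + \lambda \lVert w\rVert_1$, no intercept term, and no all-zero feature columns, and the names `soft_threshold` and `lasso_coordinate_descent` are made up for this example.

```python
def soft_threshold(value, threshold):
    """Shrink value toward zero by threshold; return 0.0 inside [-threshold, threshold]."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0

def lasso_coordinate_descent(X, y, lam, n_iters=100):
    """Minimize (1/(2n)) * ||y - Xw||^2 + lam * ||w||_1 by cyclic coordinate descent."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_iters):
        for j in range(p):
            rho = 0.0  # correlation of feature j with the partial residual
            z = 0.0    # squared norm of feature j
            for i in range(n):
                pred_without_j = sum(X[i][k] * w[k] for k in range(p) if k != j)
                rho += X[i][j] * (y[i] - pred_without_j)
                z += X[i][j] ** 2
            w[j] = soft_threshold(rho / n, lam) / (z / n)
    return w

# Hypothetical data: y depends strongly on feature 0 and only weakly on feature 1.
X = [[1.0, 1.0], [1.0, -1.0], [-1.0, 1.0], [-1.0, -1.0]]
y = [2.2, 1.9, -2.0, -2.1]

w_unpenalized = lasso_coordinate_descent(X, y, lam=0.0)  # ordinary least squares
w_lasso = lasso_coordinate_descent(X, y, lam=0.5)        # l1-penalized fit
print(w_unpenalized, w_lasso)
```

With the penalty switched on, the weak coefficient is set exactly to zero and the strong one is shrunk, which is the "more zero coordinates" behavior the snippet describes. A ridge (l2) penalty would shrink both coefficients but generally leave neither exactly zero.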

Linear Regression - Department of Computer Science, University of

CSC 411 Lecture 6: Linear Regression CSC 411: 06-Linear Regression The L1 norm, or sum of absolute values, is another regularizer that encourages |

|

Linear Methods 1 Introduction

linear regression model as above, with less than 3 points per parameter, we are Least-squares regression with l1 regularization is called lasso regression |

|

Sparsity

Reduced rank regression Topics: L1-regularized least square linear regression (LASSO): L1-regularization with a convex loss function is a convex |

|

Solving l1-Regularized Regression Problems - UW-Madison

Solving l1-Regularized Regression Problems Stephen Wright Often need to solve for multiple values of τ, e.g. to adjust sparsity to some desired level or |
