targeted adversarial attack pytorch

Torchattacks: A PyTorch Repository for Adversarial Attacks

19 Feb. 2021. To change the attack into the targeted mode with the target class $y^*$: $g(x) = \max(\max_{i \neq y^*} f(x)_i - f(x)_{y^*}$ ...
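As a rough illustration of the visible part of the expression above, the margin between the best non-target logit and the target logit can be computed from a batch of logits as sketched below. This is not the Torchattacks implementation; the function name `targeted_margin` and the example tensors are assumptions made here for illustration, and the truncated remainder of the formula is deliberately left out.

```python
import torch

def targeted_margin(logits: torch.Tensor, target: torch.Tensor) -> torch.Tensor:
    """Return max over i != target of f(x)_i, minus f(x)_target, per row.

    A negative value means the target class already has the largest logit.
    `targeted_margin` is a hypothetical helper name, not a library function.
    """
    index = target.unsqueeze(1)
    # Logit of the target class for every sample, shape (batch,).
    target_logit = logits.gather(1, index).squeeze(1)
    # Mask out the target column so the max runs over the other classes only.
    masked = logits.clone()
    masked.scatter_(1, index, float("-inf"))
    best_other = masked.max(dim=1).values
    return best_other - target_logit

# Example logits for two inputs and three classes (made-up values).
logits = torch.tensor([[2.0, 0.5, 1.0], [0.1, 3.0, 0.2]])
target = torch.tensor([1, 1])
margins = targeted_margin(logits, target)
```

In this example the first input is not yet classified as the target (positive margin), while the second already is (negative margin), so a targeted attack would try to drive the margin below zero.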

|

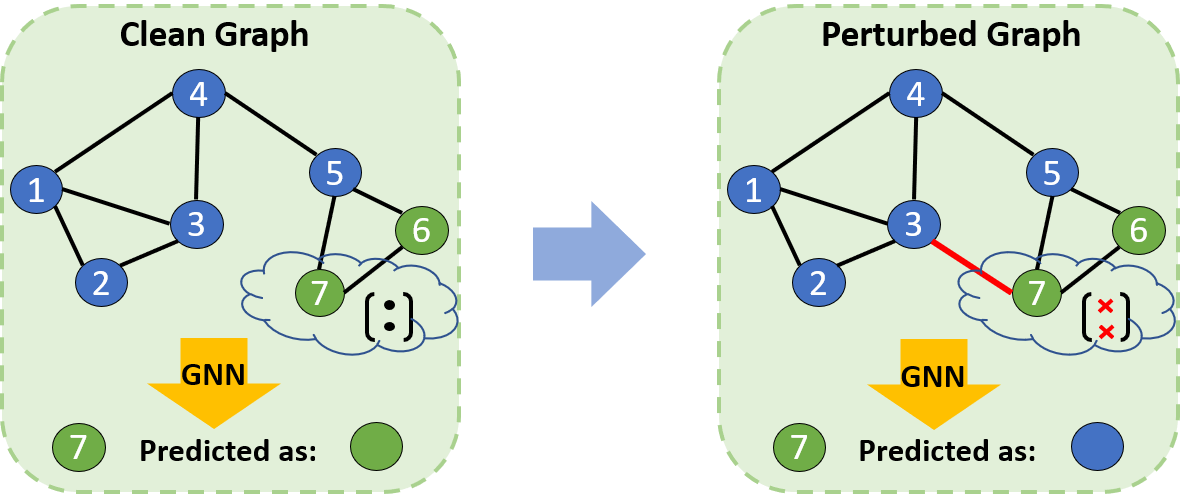

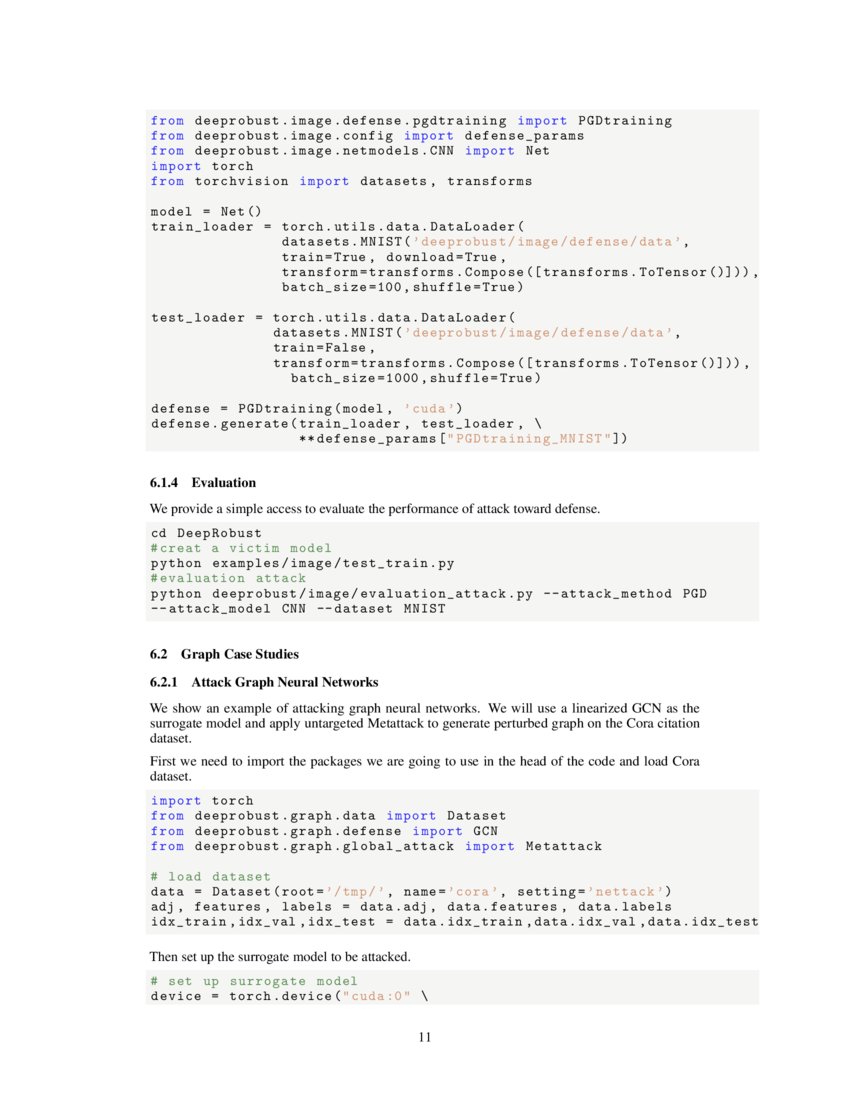

DeepRobust: A PyTorch Library for Adversarial Attacks and Defenses

13 May 2020. Non-Targeted Attack: If no specific target t is given, an adversarial example can be viewed as a successful attack as long as it is classified ...
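The distinction between the two modes can be sketched as a success check. The snippet above is cut off, so this assumes the usual convention: a non-targeted attack succeeds when the prediction differs from the true label, and a targeted attack succeeds only when the prediction equals the target t. The function name `attack_succeeded` is hypothetical and is not part of DeepRobust.

```python
from typing import Optional

def attack_succeeded(predicted: int, true_label: int, target: Optional[int] = None) -> bool:
    """Success criterion under the assumed convention described above."""
    if target is None:
        # Non-targeted: any label other than the true one counts.
        return predicted != true_label
    # Targeted: only the specific target label t counts.
    return predicted == target
```

For instance, a prediction of class 3 for an input whose true label is 5 counts as a non-targeted success, but not as a targeted success when t = 2.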

|

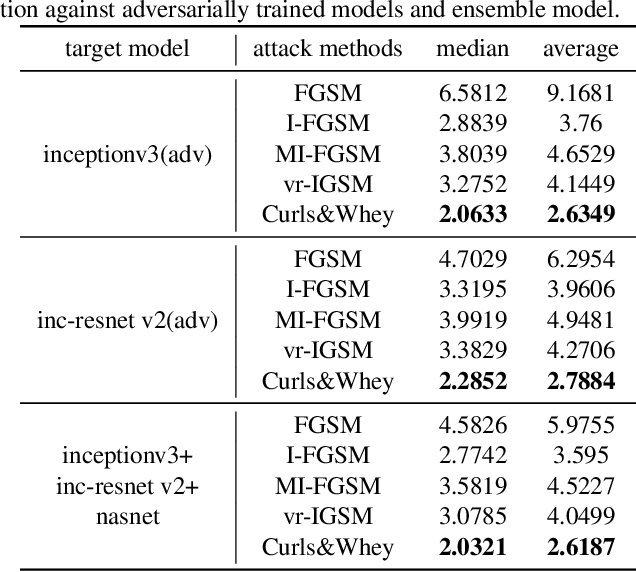

BLACK-BOX ADVERSARIAL ATTACK WITH TRANSFERABLE

By applying a standard black-box attack method such as NES on the embedding space, adversarial perturbations can be found efficiently for a target model. It is ...

|

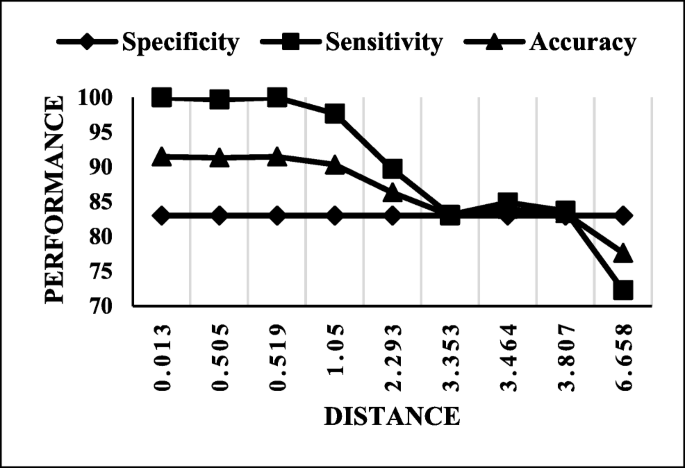

Evaluation of Momentum Diverse Input Iterative Fast Gradient Sign

... the adversarial image, and in the targeted attack the goal is to misclassify ... For implementation and to simulate the adversarial attack ...

Simple Black-box Adversarial Attacks

... used for both untargeted and targeted attacks, resulting in previously unprecedented query efficiency ... implemented in PyTorch in under 20 lines of code ... we ...
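To give a feel for how short such a query-based attack can be, here is a minimal sketch of a greedy coordinate search in the pixel basis that uses only the model's output probabilities. It follows the general idea of the paper as commonly described and is not the authors' code; the function name `simba_pixel`, the step size, the toy linear model, and the random input are all assumptions made here for illustration.

```python
import torch
import torch.nn as nn

def simba_pixel(model, x, label, eps=0.2, num_iters=100, targeted=False):
    """Greedy black-box search over single pixels (hypothetical sketch).

    x: one image with values in [0, 1], shape (C, H, W).
    label: the true class (untargeted) or the target class (targeted).
    """
    def prob(img):
        # Only output probabilities are queried; no gradients are used.
        with torch.no_grad():
            return torch.softmax(model(img.unsqueeze(0)), dim=1)[0, label].item()

    x = x.clone()
    n_dims = x.numel()
    perm = torch.randperm(n_dims)  # random order of pixel coordinates
    best = prob(x)
    for i in range(min(num_iters, n_dims)):
        step = torch.zeros(n_dims)
        step[perm[i]] = eps
        step = step.view_as(x)
        # Try subtracting, then adding, eps on this coordinate.
        for candidate in ((x - step).clamp(0, 1), (x + step).clamp(0, 1)):
            p = prob(candidate)
            improved = p > best if targeted else p < best
            if improved:
                x, best = candidate, p
                break
    return x

torch.manual_seed(0)
toy_model = nn.Sequential(nn.Flatten(), nn.Linear(12, 3))  # stand-in classifier
image = torch.rand(3, 2, 2)
adv_image = simba_pixel(toy_model, image, label=0, num_iters=12)
```

Each coordinate is visited at most once, so no pixel moves by more than `eps`, and a step is kept only when it lowers the probability of the true class (or raises that of the target class in targeted mode).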

TnT Attacks! Universal Naturalistic Adversarial Patches Against

... the network to make a malicious decision (targeted attack). Interestingly, now ...

Can Targeted Adversarial Examples Transfer When the Source and

17 Mar. 2021. During the online phase of the attack, we then leverage representations of highly related proxy classes from the whitebox distribution to ...

Foolbox Documentation

23 Oct. 2019. Creating a targeted adversarial for the Keras ResNet model ... 3.1.1 Running a batch attack against a PyTorch model: import foolbox ...

A Little Robustness Goes a Long Way: Leveraging Robust Features

25 Oct. 2021. ... and representation-targeted adversarial attacks, even between architectures as dif... experiments ...

A survey on Kornia: an Open Source Differentiable Computer Vision

21 Sept. 2020. Keywords: Computer Vision, Open Source

What are the adversarial attack baselines for PyTorch?

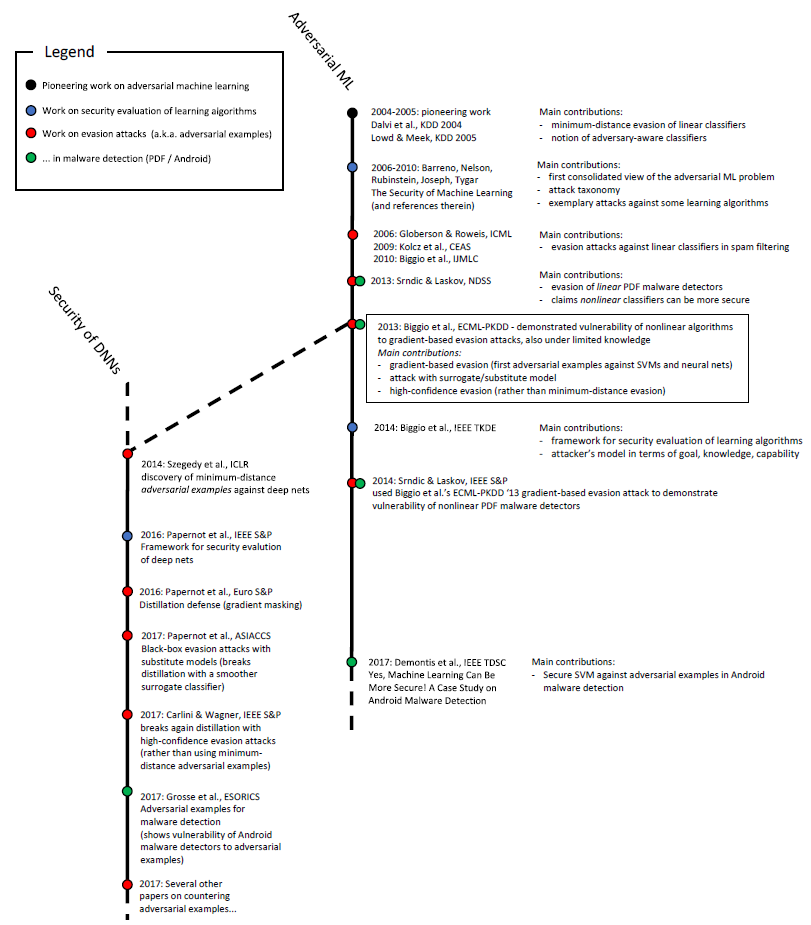

PyTorch adversarial attack baselines for ImageNet, CIFAR10, and MNIST (state-of-the-art attacks comparison). This repository provides simple PyTorch implementations for evaluating various adversarial attacks and shows state-of-the-art attack success rates for each dataset.

What is adversarial patch attack?

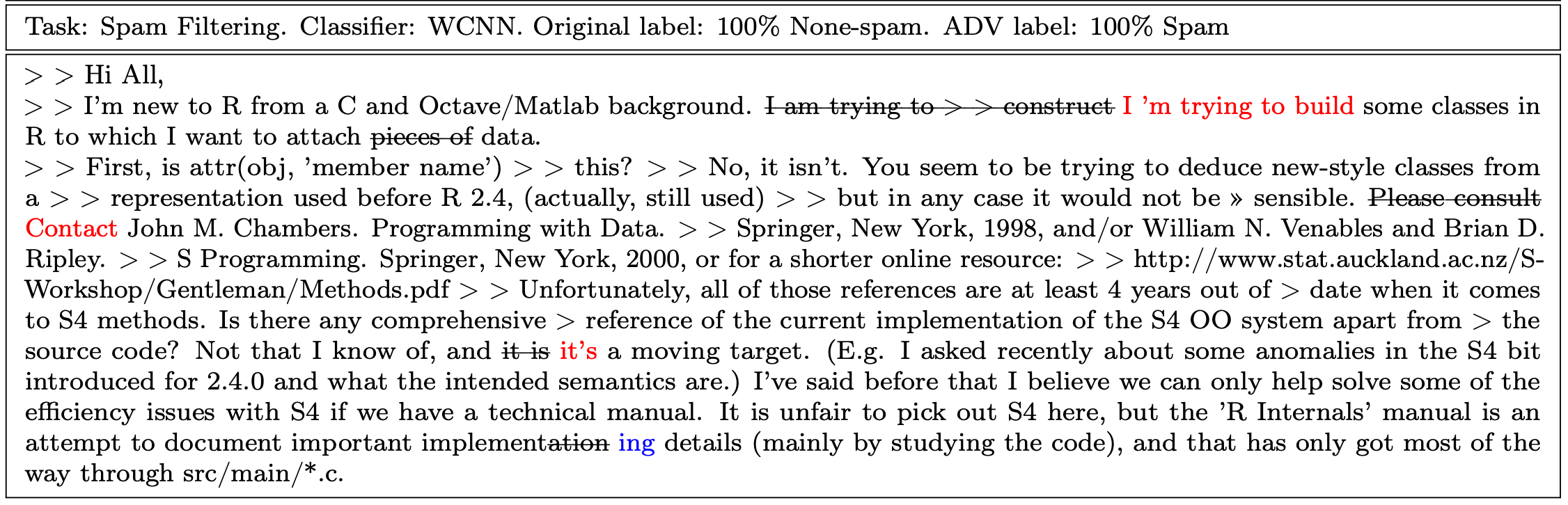

An adversarial patch attack against image-classification deep neural networks (DNNs), in which the attacker can inject arbitrary distortions within a bounded region of an image, can generate adversarial perturbations that are robust (i.e., remain adversarial in the physical world) and universal (i.e., remain adversarial on any input).

Is there a PyTorch implementation for adversarial discriminative domain adaptation?

A PyTorch implementation for Adversarial Discriminative Domain Adaptation. I only test on MNIST -> USPS; you can just run the following command: ... In this experiment, I use three types of network. They are very simple.

What is the adversarial attack method?

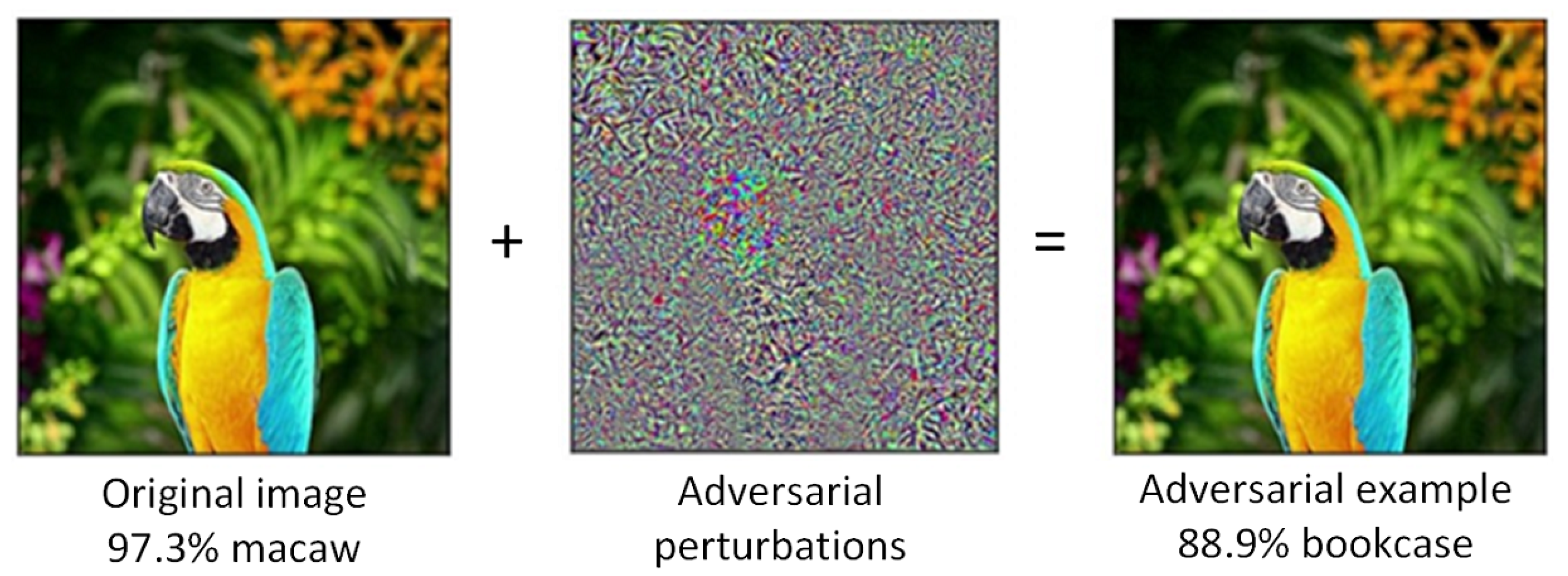

The adversarial attack method we will implement is called the Fast Gradient Sign Method (FGSM). It gets its name because we construct the image adversary by calculating the gradients of the loss, computing the sign of the gradient, and then using the sign to build the image adversary.
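The three steps just described (gradient of the loss, sign of the gradient, signed step on the image) can be sketched in PyTorch as follows. This is a minimal sketch rather than the code of any particular tutorial or library: the function name `fgsm`, the `eps` value, the toy linear model, and the random inputs are assumptions made here for illustration. The `targeted` flag is an added variant, not part of the description above, that steps against the gradient of the loss for a chosen target label.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

def fgsm(model, x, y, eps=0.03, targeted=False):
    """One-step FGSM on a batch x with values in [0, 1] (hypothetical helper).

    y holds the true labels (untargeted) or the desired target labels (targeted).
    """
    x = x.clone().detach().requires_grad_(True)
    # Step 1: gradient of the loss with respect to the input image.
    loss = F.cross_entropy(model(x), y)
    grad, = torch.autograd.grad(loss, x)
    # Step 2: keep only the sign of the gradient.
    # Step 3: move up the loss (untargeted) or down the target loss (targeted).
    direction = -1.0 if targeted else 1.0
    x_adv = x + direction * eps * grad.sign()
    # Keep the result a valid image.
    return x_adv.clamp(0, 1).detach()

torch.manual_seed(0)
model = nn.Sequential(nn.Flatten(), nn.Linear(28 * 28, 10))  # stand-in classifier
x = torch.rand(4, 1, 28, 28)
y = torch.randint(0, 10, (4,))
x_adv = fgsm(model, x, y, eps=0.03)
```

Because every pixel moves by at most `eps`, the perturbation is bounded in the L-infinity norm, which is why FGSM results are usually reported together with the `eps` that was used.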

BLACK-BOX ADVERSARIAL ATTACK WITH - OpenReview.net

... efficiency of black-box adversarial attack across different target network architec... the source model to attack an unknown target network (Goodfellow et al., 2015; Madry et ... used in CIFAR10 is from https://github.com/prlz77/ResNeXt.pytorch

Adversarial training for free - NIPS Proceedings - NeurIPS

... of the few defenses against adversarial attacks that withstands strong attacks ... In what follows, we will use non-targeted adversarial examples both for evaluating ... ImageNet Adversarial Training for Free code in PyTorch can be found here: ...

Simple Black-box Adversarial Attacks - Proceedings of Machine

... adversary to have complete knowledge of the target model ... implemented in PyTorch in under 20 lines of code ... we consider our ...

Towards Understanding and Improving the Transferability of

On the contrary, the white-box attack means that the target model is available to the adversary ... 2.2 Generating adversarial examples. Modeling: In general, crafting ...
