language model

Is GPT a language model?

The GPT models are general-purpose language models that can perform a broad range of tasks, from creating original content to writing code, summarizing text, and extracting data from documents.

What are examples of language models?

Uses and examples of language modeling

- Speech recognition: this involves a machine being able to process speech audio.
- Text generation
- Chatbots
- Machine translation
- Part-of-speech tagging
- Parsing
- Optical character recognition
- Information retrieval

AI language models are a key component of natural language processing (NLP), a field of artificial intelligence (AI) focused on enabling computers to understand and generate human language.

What is a language model?

A language model is a probability distribution over words or word sequences.
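
To make the definition concrete, here is a minimal Python sketch of a toy language model written out as an explicit table: a probability distribution over a tiny, made-up set of complete word sequences (the sequences and probabilities are hypothetical). Real language models cannot enumerate sequences like this and instead factor the probability word by word, but the two properties checked here, non-negativity and summing to 1, are what make any such table a distribution.

```python
import random

# Hypothetical toy "language model": an explicit probability distribution
# over a tiny, made-up set of complete word sequences.
toy_lm = {
    ("the", "cat", "sat"): 0.4,
    ("the", "dog", "sat"): 0.3,
    ("a", "cat", "ran"): 0.2,
    ("a", "dog", "ran"): 0.1,
}


def probability(sequence):
    """Return p(sequence); sequences outside the table get probability 0."""
    return toy_lm.get(tuple(sequence), 0.0)


def sample(rng=random):
    """Draw one word sequence according to the distribution."""
    sequences = list(toy_lm)
    weights = [toy_lm[s] for s in sequences]
    return rng.choices(sequences, weights=weights, k=1)[0]


# A valid distribution: probabilities are non-negative and sum to 1.
assert all(p >= 0 for p in toy_lm.values())
assert abs(sum(toy_lm.values()) - 1.0) < 1e-9

print(probability(["the", "cat", "sat"]))  # 0.4
print(sample(random.Random(0)))
```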

Learn more about different types of language models and what they can do.

Written by Mór Kapronczay.

Published on Dec. 13, 2022

How Large Language Models Work

Create a Large Language Model from Scratch with Python – Tutorial

A Practical Introduction to Large Language Models (LLMs)

Universal Language Model Fine-tuning for Text Classification

We propose Universal Language Model Fine-tuning (ULMFiT), an effective transfer learning method that can be applied to any task in NLP ...

A Neural Probabilistic Language Model

A goal of statistical language modeling is to learn the joint probability function of sequences of words in a language. This is intrinsically difficult because ...
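
The "joint probability function of sequences of words" is usually written with the chain rule, and n-gram models, like the neural model this paper proposes, approximate each factor by conditioning only on the previous n-1 words. A worked statement of both steps, in our own notation ($w_t$ is the $t$-th word of a $T$-word sequence):

```latex
P(w_1, \ldots, w_T)
  = \prod_{t=1}^{T} P(w_t \mid w_1, \ldots, w_{t-1})
  \approx \prod_{t=1}^{T} P(w_t \mid w_{t-n+1}, \ldots, w_{t-1})
```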

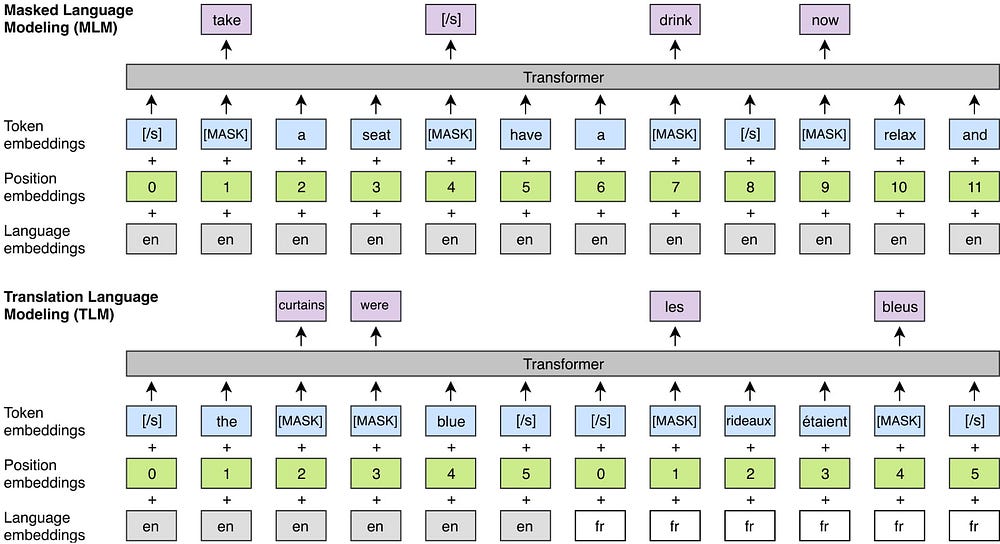

Cross-lingual Language Model Pretraining

We show that cross-lingual language models can provide significant improvements on the perplexity of low-resource languages. We make our code and pretrained ...
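
Perplexity, the metric in this abstract, is the exponentiated average negative log-probability a model assigns to held-out tokens; lower is better. A minimal Python sketch, assuming the per-token conditional probabilities have already been computed by some model (the inputs below are made up):

```python
import math


def perplexity(token_probs):
    """Perplexity from the probabilities p(w_t | history) that a language
    model assigned to each of the N tokens of a held-out sequence:
        PPL = exp(-(1/N) * sum_t log p(w_t | history))
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    if any(p <= 0 for p in token_probs):
        raise ValueError("probabilities must be positive")
    avg_neg_log = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log)


# A model that gives every token probability 1/4 has perplexity 4 (up to
# floating-point rounding): it is as uncertain as a uniform choice among 4 words.
print(perplexity([0.25, 0.25, 0.25]))
```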

Variable-Length Sequence Language Model for Large Vocabulary

Oct. 19, 2006. One of the most successful language models used in speech recognition is the n-gram model, which assumes that the statistical dependencies ...

When Being Unseen from mBERT is just the Beginning: Handling

Handling New Languages With Multilingual Language Models. Benjamin Muller, Antonios Anastasopoulos ... experiments to get usable language model ...

Enhancing lexical cohesion measure with confidence measures

Nov. 30, 2011. Enhancing lexical cohesion measure with confidence measures, semantic relations and language model interpolation for multimedia spoken con...
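
The snippet does not say how the models are interpolated; the most common form is linear interpolation, where several language models' next-word distributions are mixed with weights that sum to 1. A minimal sketch under that assumption (the two distributions and the weights are hypothetical):

```python
def interpolate(distributions, weights):
    """Linear interpolation of next-word distributions for one history h:
        p(w | h) = sum_i lambda_i * p_i(w | h)
    Each distribution is a dict word -> probability. The weights must be
    non-negative and sum to 1 so the mixture is still a distribution."""
    if len(distributions) != len(weights):
        raise ValueError("need exactly one weight per model")
    if any(lam < 0 for lam in weights) or abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must be non-negative and sum to 1")
    vocab = set().union(*distributions)
    return {
        w: sum(lam * d.get(w, 0.0) for lam, d in zip(weights, distributions))
        for w in vocab
    }


# Hypothetical general-domain and in-domain models for the same history.
p_general = {"meeting": 0.6, "match": 0.4}
p_sports = {"meeting": 0.1, "match": 0.9}
mixed = interpolate([p_general, p_sports], [0.3, 0.7])
print(mixed)
```

In practice the weights are usually tuned on held-out data rather than set by hand.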

Release of largest trained open-science multilingual language

July 12, 2022. BLOOM is the largest multilingual language model to be trained 100% openly and transparently. ... AI models of its kind ...

Recurrent Neural Network Based Language Model

A new recurrent neural network based language model (RNN LM) with applications to speech recognition is presented. Results indicate that it is possible ...

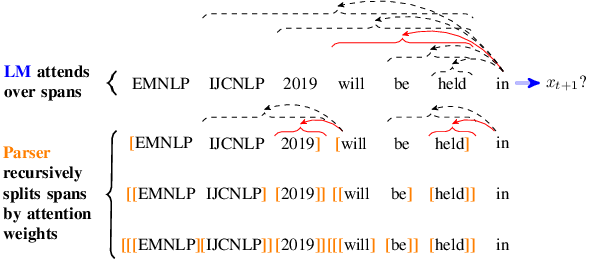

N-gram Language Models

... probabilities to sentences and sequences of words, the n-gram. An n-gram is a sequence of n words: a 2-gram (which we'll call bigram) is a ...
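
Bigram probabilities are commonly estimated by maximum likelihood, dividing how often a word pair occurs by how often its first word occurs as a history. A minimal Python sketch; the `<s>`/`</s>` sentence-boundary markers and the three-sentence toy corpus are assumptions of this example, and no smoothing is applied, so unseen bigrams get probability 0:

```python
from collections import Counter


def train_bigram(sentences):
    """Maximum-likelihood bigram model: P(w | prev) = C(prev, w) / C(prev),
    where C(prev) counts how often prev appears as a history word."""
    history_counts = Counter()
    bigram_counts = Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        history_counts.update(tokens[:-1])
        bigram_counts.update(zip(tokens, tokens[1:]))

    def prob(prev, word):
        if history_counts[prev] == 0:
            return 0.0
        return bigram_counts[(prev, word)] / history_counts[prev]

    return prob


def sentence_prob(prob, sentence):
    """Multiply bigram probabilities across a padded sentence."""
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    p = 1.0
    for prev, word in zip(tokens, tokens[1:]):
        p *= prob(prev, word)
    return p


corpus = ["I am Sam", "Sam I am", "I do not like green eggs and ham"]
prob = train_bigram(corpus)
print(prob("<s>", "I"))                    # 2/3
print(sentence_prob(prob, "I am Sam"))     # 2/3 * 2/3 * 1/2 * 1/2 = 1/9
```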

Language Modelling for Speech Recognition

Language models (LMs) assign a probability estimate P(W) to word sequences W. Language model probabilities P(W) are usually incorporated into the ASR ...
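
A common way to incorporate P(W) into a recognizer is the noisy-channel decision rule: choose the word sequence W that maximizes P(A | W) · P(W) for the acoustics A, usually in log space with a tunable weight on the language model term. A sketch of rescoring a short list of hypotheses under that assumption; the candidate sentences, their scores, and the `lm_weight` parameter are made up for illustration:

```python
import math


def rescore(hypotheses, lm_weight=1.0):
    """Return the hypothesis W maximizing
        log P(A | W) + lm_weight * log P(W).
    `hypotheses` maps each candidate transcription to a pair
    (acoustic_prob, lm_prob); the numbers used below are placeholders."""
    def score(item):
        acoustic_p, lm_p = item[1]
        return math.log(acoustic_p) + lm_weight * math.log(lm_p)

    return max(hypotheses.items(), key=score)[0]


candidates = {
    "recognize speech": (0.30, 0.020),     # slightly worse acoustically, far more likely text
    "wreck a nice beach": (0.35, 0.001),   # slightly better acoustically, unlikely text
}
print(rescore(candidates))                 # recognize speech
print(rescore(candidates, lm_weight=0.0))  # wreck a nice beach (acoustics only)
```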

Recurrent Neural Networks (RNN)

How to build a neural language model?
- Recall the language modeling task:
  - Input: sequence of words
  - Output: probability distribution of the ...
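
The input/output contract above can be sketched with a tiny recurrent network that reads a word sequence and returns a probability distribution over the next word. This is an untrained toy in pure Python, assuming one-hot inputs, a tanh hidden layer, no biases, and random weights (`TinyRNNLM` and its parameters are hypothetical names), so its predictions are meaningless until trained:

```python
import math
import random


def softmax(logits):
    """Turn a list of scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class TinyRNNLM:
    """Untrained Elman-style RNN language model with random weights and no
    biases; it only illustrates the forward pass, not training."""

    def __init__(self, vocab, hidden_size=8, seed=0):
        rng = random.Random(seed)
        self.vocab = list(vocab)
        self.index = {w: i for i, w in enumerate(self.vocab)}
        self.hidden_size = hidden_size

        def init(rows, cols):
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

        v, h = len(self.vocab), hidden_size
        self.w_xh = init(h, v)  # one-hot input word -> hidden state
        self.w_hh = init(h, h)  # previous hidden state -> hidden state
        self.w_hy = init(v, h)  # hidden state -> one score per vocabulary word

    def next_word_distribution(self, words):
        """Read the input words left to right, then return P(next word | words)."""
        h = [0.0] * self.hidden_size
        for word in words:
            x = [0.0] * len(self.vocab)
            x[self.index[word]] = 1.0
            pre = [a + b for a, b in zip(matvec(self.w_xh, x), matvec(self.w_hh, h))]
            h = [math.tanh(z) for z in pre]
        return dict(zip(self.vocab, softmax(matvec(self.w_hy, h))))


lm = TinyRNNLM(["the", "cat", "sat", "on", "mat"])
print(lm.next_word_distribution(["the", "cat"]))
```

With an empty input the hidden state stays at zero, so every word gets the same probability; training would adjust the three weight matrices to make observed next words more likely.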

Chapter 52: Language Model and IR - IRIT

Query likelihood model (Query Likelihood) – References. Language model • Language model (statistical language model) ...
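
In the query likelihood model, each document is treated as a language model and documents are ranked by the probability that their model generates the query. A minimal Python sketch, assuming unigram document models with Jelinek-Mercer smoothing against the collection (one common choice among several; the `lam` value and the example documents are hypothetical):

```python
import math
from collections import Counter


def query_likelihood_rank(query, documents, lam=0.5):
    """Rank documents by log P(query | document model), best first.

    Each document gets a unigram model smoothed with the collection model:
        P(t | d) = (1 - lam) * tf(t, d) / |d| + lam * cf(t) / |C|
    """
    if not 0 < lam <= 1:
        raise ValueError("lam must be in (0, 1] so unseen terms keep nonzero probability")
    doc_counts = [Counter(doc.lower().split()) for doc in documents]
    collection = Counter()
    for counts in doc_counts:
        collection.update(counts)
    collection_len = sum(collection.values())

    scores = []
    for counts in doc_counts:
        doc_len = sum(counts.values())
        score = 0.0
        for term in query.lower().split():
            if collection[term] == 0:
                # Absent from every document: it would zero out all documents
                # equally, so skipping it leaves the ranking unchanged.
                continue
            p_doc = counts[term] / doc_len if doc_len else 0.0
            score += math.log((1 - lam) * p_doc + lam * collection[term] / collection_len)
        scores.append(score)
    return sorted(range(len(documents)), key=lambda i: scores[i], reverse=True)


docs = [
    "language models assign probabilities to word sequences",
    "information retrieval ranks documents for a query",
    "a query likelihood model uses language models for retrieval",
]
print(query_likelihood_rank("language model retrieval", docs))
```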

Probabilistic Language Models 1.0

A language model is a probability distribution for a random variable X, which takes values in V† (i.e., sequences in the vocabulary that end in a special stop symbol). Therefore, a language model defines p : V† → ℝ such that: ∀x ∈ V† ...
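
The snippet stops at the quantifier. The conditions any probability distribution over $V^\dagger$ must meet are non-negativity and normalization; they are stated here as the standard requirements, not as a quotation of the source:

```latex
p(\boldsymbol{x}) \ge 0 \quad \forall \boldsymbol{x} \in V^{\dagger},
\qquad
\sum_{\boldsymbol{x} \in V^{\dagger}} p(\boldsymbol{x}) = 1
```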

A Neural Probabilistic Language Model - Journal of Machine

A goal of statistical language modeling is to learn the joint probability function of sequences of words in a language. This is intrinsically difficult because of the ...

A Neural Language Model for Dynamically Representing the

... variant of RNN language model outperformed the baseline model. Furthermore, the experiments also demonstrate that dynamic updates of an output layer help a ...

A Hierarchical Word Sequence Language Model - Association for

Experiments verified the effectiveness of our model. ... 1 Introduction. Most language models used for natural language processing, such as the n-gram approach ...

Chapter 7 Language models - Statistical Machine Translation

Language models answer the question: ... For instance, a 2-gram language model: p(w1, w2 ... Recall: maximum likelihood estimation of a unigram language model ...
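
For reference, the maximum likelihood estimate of a unigram language model is the relative frequency of each word in the training data, where $\operatorname{count}(w)$ is the number of occurrences of $w$:

```latex
p(w) = \frac{\operatorname{count}(w)}{\sum_{w'} \operatorname{count}(w')}
```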

Are All Languages Equally Hard to Language-Model? - Ryan Cotterell

... apart these hypotheses. 2 Language Modeling. A traditional closed-vocabulary, word-level language model operates as follows: Given a fixed set of words V ...
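
One common way to keep a word-level model closed-vocabulary is to fix V from the training data and map every other word to a single unknown-word symbol. A minimal sketch under that assumption; the `<unk>` symbol name and the `min_count` cutoff are illustrative choices, not taken from the paper:

```python
from collections import Counter

UNK = "<unk>"  # hypothetical unknown-word symbol name


def build_vocab(training_tokens, min_count=2):
    """Fixed vocabulary V: words seen at least `min_count` times in training,
    plus the unknown-word symbol. `min_count` is an illustrative cutoff."""
    counts = Counter(training_tokens)
    return {w for w, c in counts.items() if c >= min_count} | {UNK}


def map_to_vocab(tokens, vocab):
    """Replace every out-of-vocabulary token with UNK, so the model only
    ever has to predict words from the fixed set V."""
    return [t if t in vocab else UNK for t in tokens]


train = "the cat sat on the mat the cat ran".split()
vocab = build_vocab(train)
print(sorted(vocab))                               # ['<unk>', 'cat', 'the']
print(map_to_vocab("the dog sat".split(), vocab))  # ['the', '<unk>', '<unk>']
```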

![Pre-trained language model series topics - Programmer Sought](https://pbs.twimg.com/media/EYbMqdpWsAAYQhC.jpg)