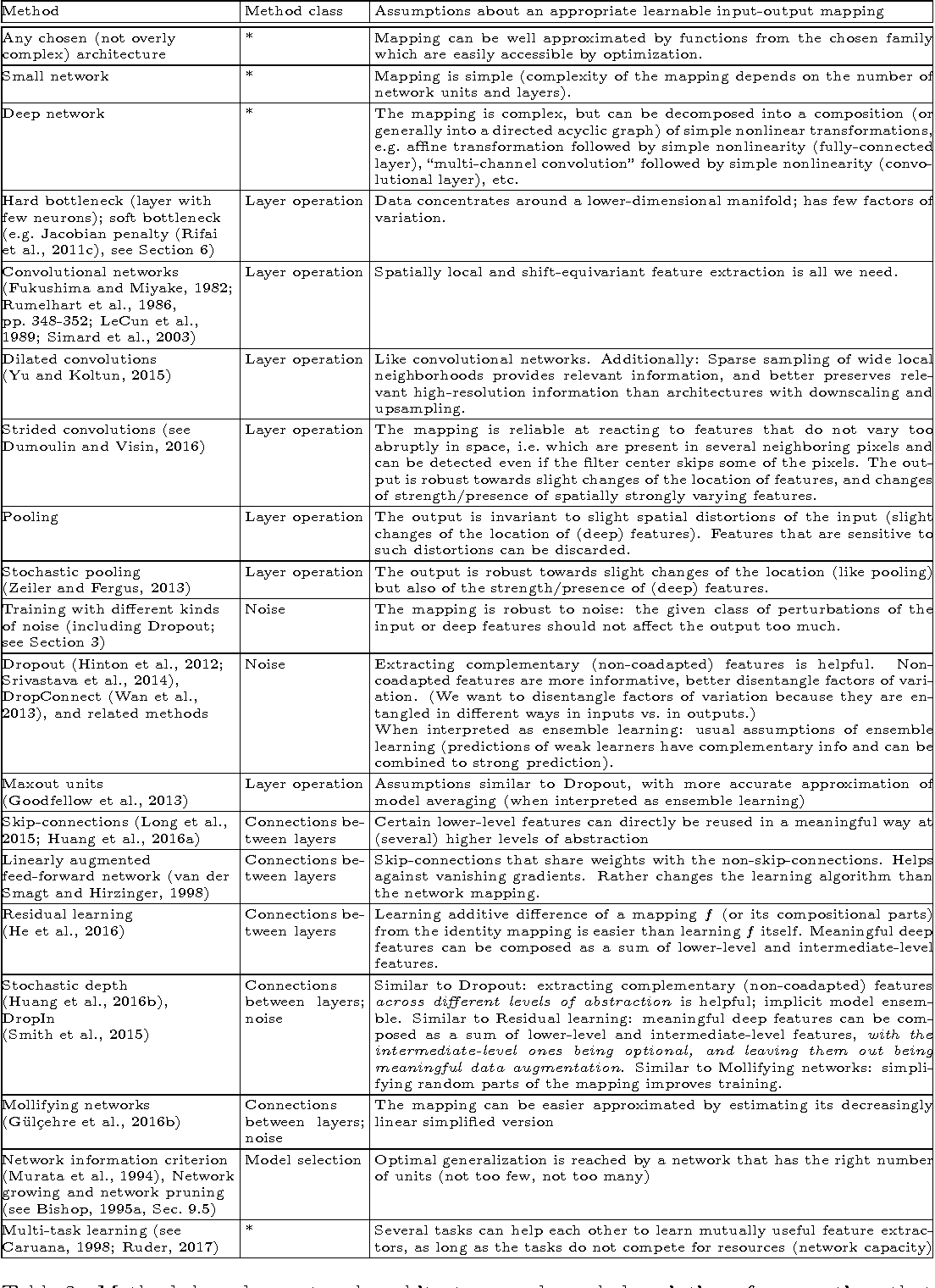

L1 and L2 regularization in deep learning

|

ACHIEVING STRONG REGULARIZATION FOR DEEP NEURAL

L1 and L2 regularizers are critical tools in machine learning due to their ability to simplify solutions. However, imposing strong L1 or L2 regularization |

|

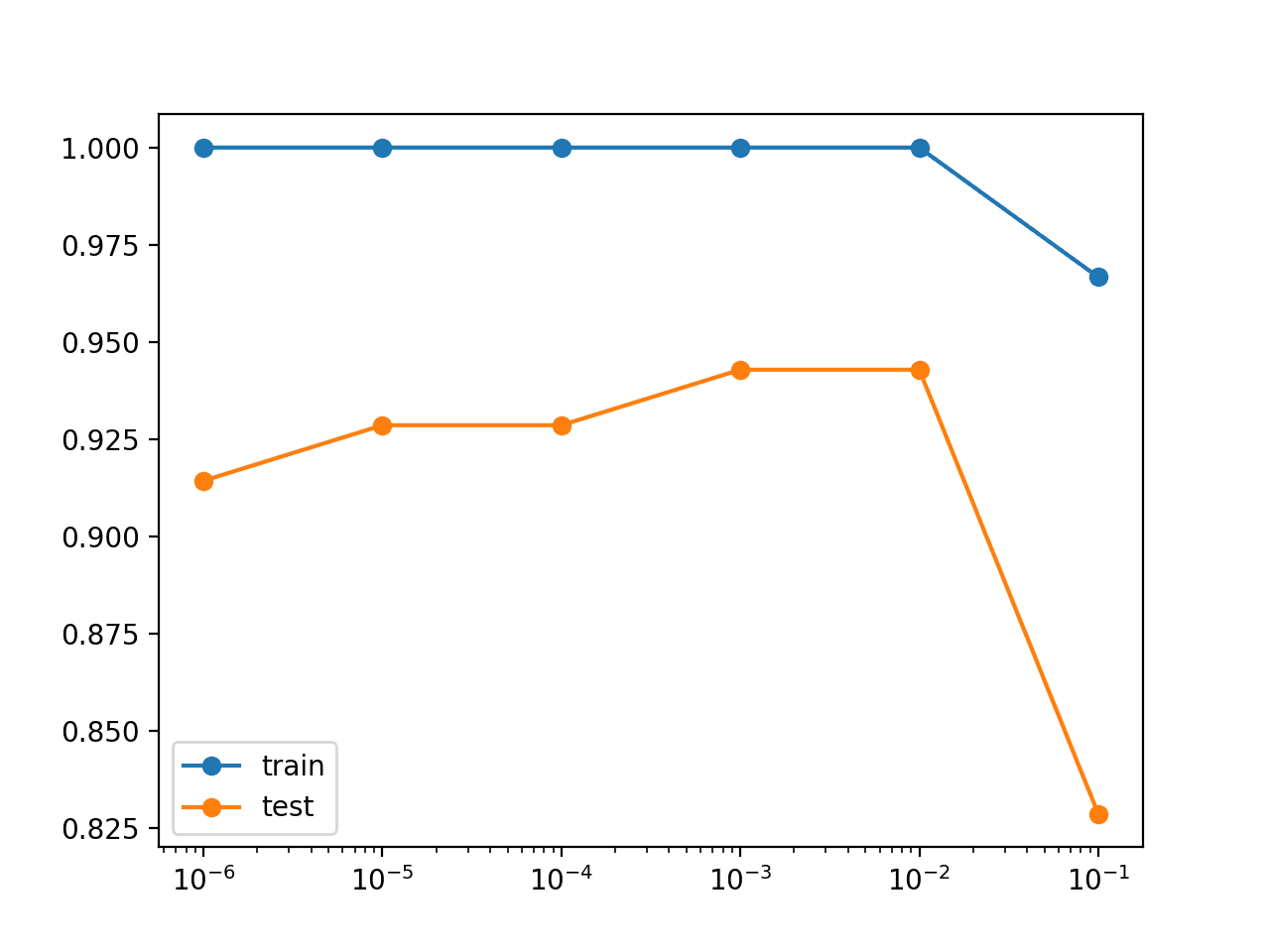

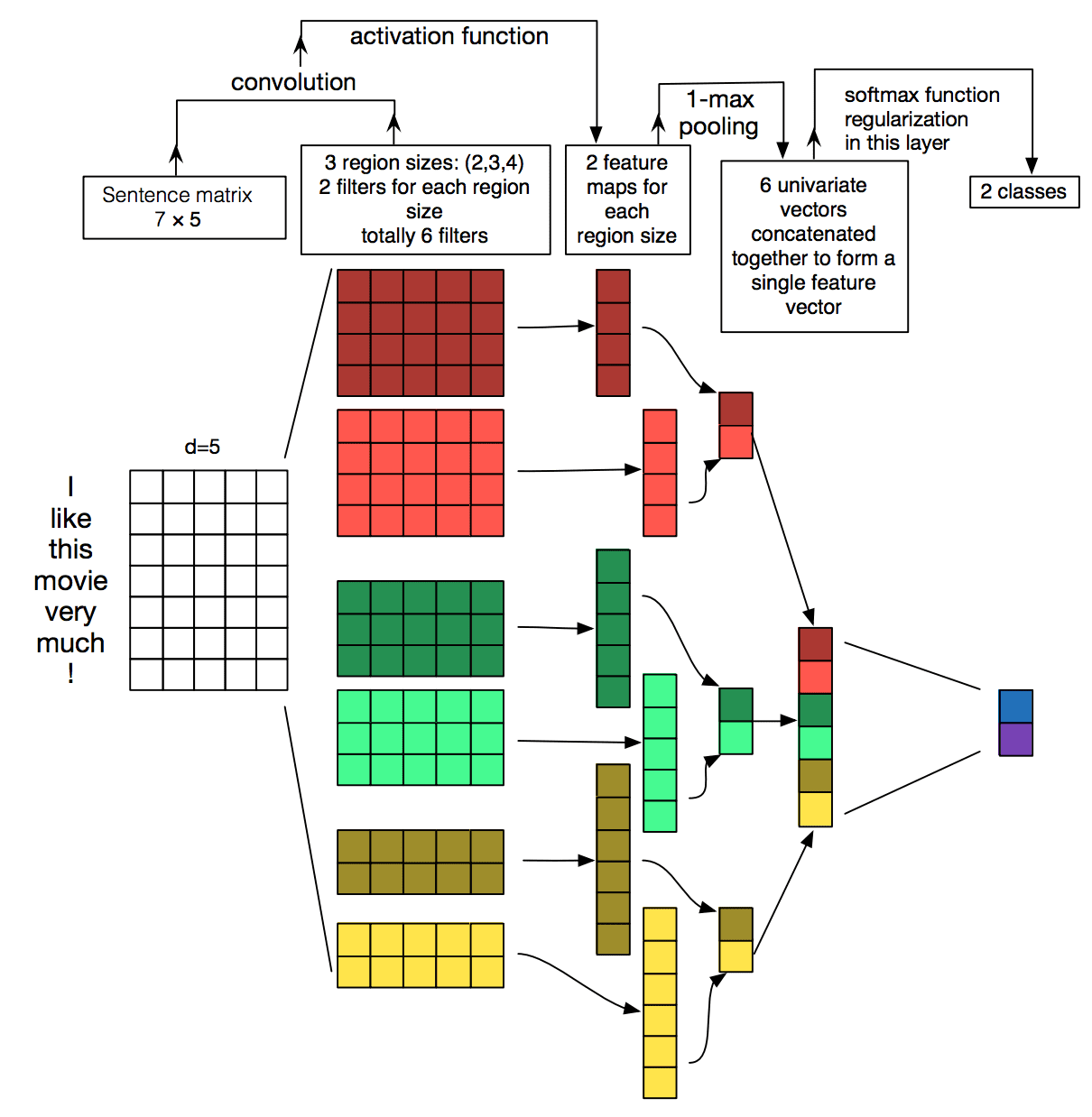

Effect of the regularization hyperparameter on deep learning-based

semantic segmentation with deep learning is demonstrated. Methods used to constrain the weights include L1 and L2 regularization, which penalize the sum ... |

|

The Efficacy of L1 Regularization in Two-Layer Neural Networks

2 Oct 2020 For example the L1 or L2 risks of typical parametric models such as ... Schmidt-Hieber [2017] proved that specific deep neural networks ... |

|

Gradient-Coherent Strong Regularization for Deep Neural Networks

18 Oct 2019 L1 and L2 regularizers are common regularization tools in machine learning with their simplicity and effectiveness. However we observe that im-. |

|

Shakeout: A New Approach to Regularized Deep Neural Network

13 Apr 2019 statistical trait: the regularizer induced by Shakeout adaptively combines L0, L1, and L2 regularization terms. Our classification. |

|

Deep Neural Network Regularization for Feature Selection in

3 May 2019 ... techniques such as L1 and L2 regularization are mainly used to reduce overfitting and the complexity of deep neural networks. |

|

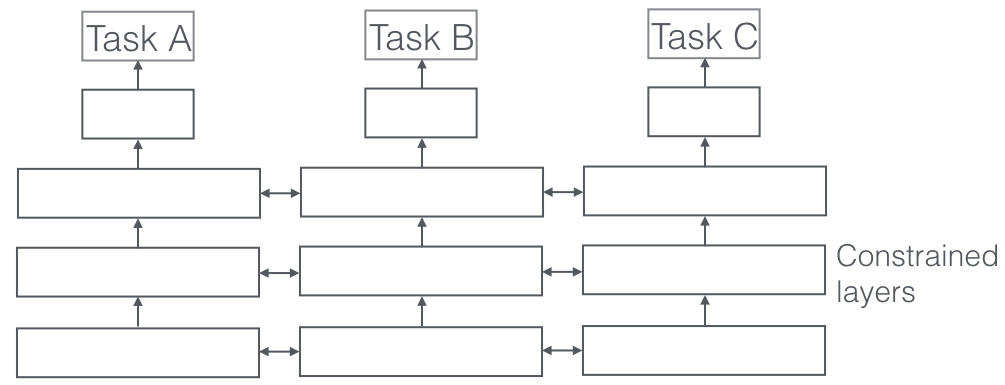

Shakeout: A New Regularized Deep Neural Network Training Scheme

We show that our scheme leads to a combination of L1 regularization and L2 regularization imposed on the weights, which has been proved effective by the. |

|

Adaptive Knowledge Driven Regularization for Deep Neural Networks

2010; Srebro and Shraibman 2005) regularizes the values of the model parameters similar to L1-norm and L2-norm regularization by constraining the norm of |

|

Bridgeout: stochastic bridge regularization for deep neural networks

21 Apr 2018 However, the choice of the norm of weight penalty is problem dependent and is not restricted to {L1 |

|

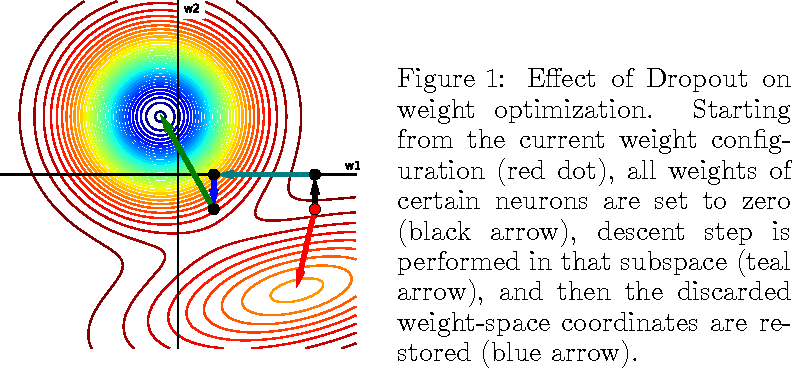

Regularization for Deep Learning - UNIST

Often referred to as the weight decay regularizer, since optimizing for the L2 norm shrinks the value of the variables at each iteration. Regularized objective: |

|

Regularization for Deep Learning

While L2 weight decay is the most common form of weight decay, there are other ways to penalize the size of the model parameters. Another option is to use L1 |

|

Deep learning - Optimization and Regularization in deep networks

6 Mar 2021 · 1 Other related forms of L2 regularization include: 1 1 Instead of a soft constraint that w be small, one may have prior knowledge that w |

|

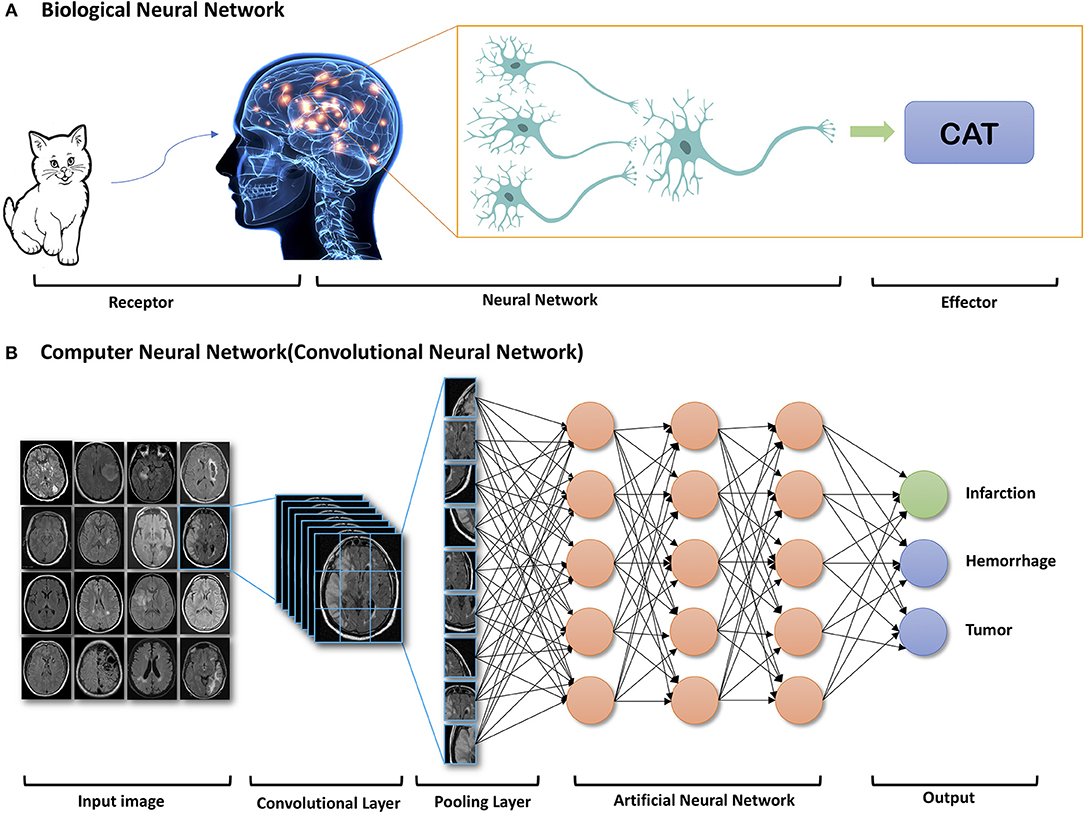

Deep Learning Basics Lecture 3: regularization - csPrinceton

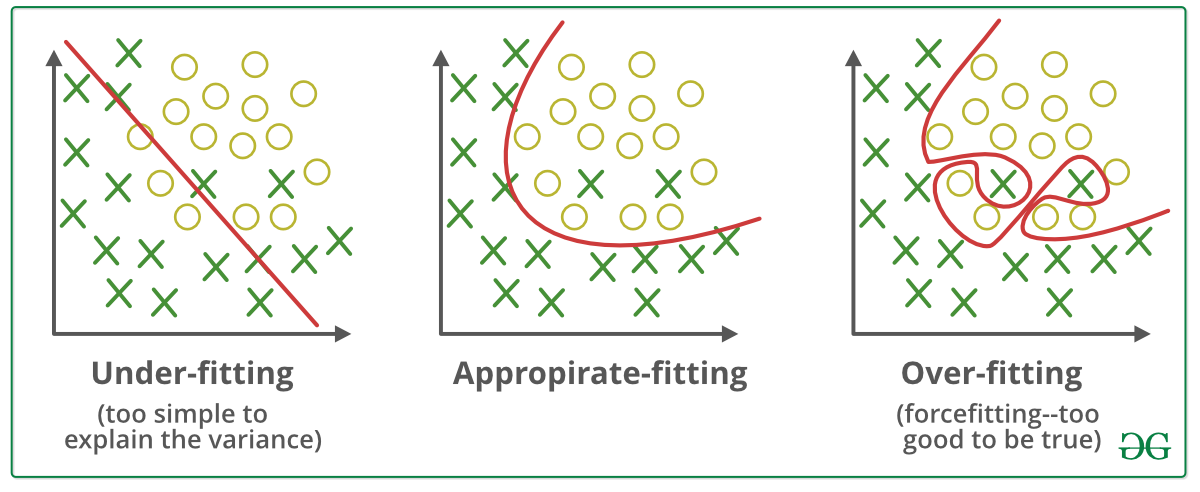

What is regularization? • In general: any method to prevent overfitting or help the optimization • Specifically: additional terms in the training |

|

Regularization in Deep Neural Networks - OPUS at UTS

ity of deep neural networks beyond Dropout, via introducing a combination of L0, L1, and L2 regularization effect into the network training |

|

Lecture 2: Overfitting Regularization

L2 and L1 regularization for linear estimators A general HUGELY IMPORTANT problem for all machine learning algorithms |

|

Deep Neural Networks with L1 and L2 Regularization for High

Deep Neural Networks with L1 and L2 Regularization for High Dimensional Corporate Credit Risk Prediction. Accurate credit risk prediction can |

|

A Deep Learning Regularization Technique using Linear Regression

3 Nov 2020 · proposed regularization method: 1) gives major improvements ... tion methods used in the context of deep learning ... l2-norm |

|

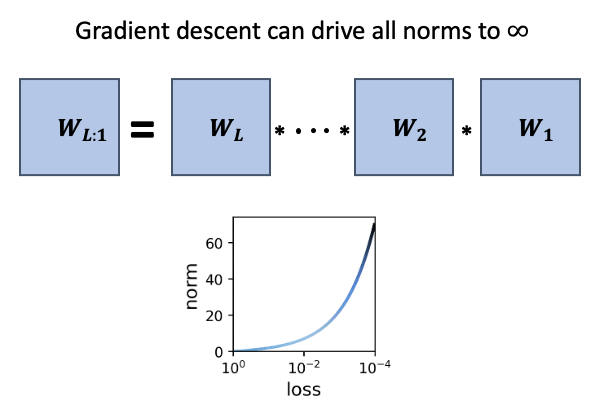

On the training dynamics of deep networks with L2 regularization

such networks and compare the role of L2 regularization in this context with that of linear models 1 Introduction Machine learning models are commonly |

What are L1 and L2 regularization techniques in deep learning?

L1 regularization adds the sum of the absolute values of the weights as a penalty term to the loss function; a linear regression model trained this way is called lasso regression. L2 regularization adds the sum of the squared weights as the penalty term; a linear regression model trained this way is called ridge regression.

What is L1 and L2 regularization used for?

L1 regularization drives many weights to exactly zero, so it is used to reduce the number of features in high-dimensional datasets. L2 regularization shrinks all weights toward zero without eliminating any of them, spreading the penalty across all the weights, which often yields models that generalize better.

How does L1 and L2 regularization help neural networks generalize better?

L2 & L1 regularization

Adding the regularization term to the loss pushes the values in the weight matrices toward smaller magnitudes, on the assumption that a neural network with smaller weights is a simpler model. This reduces overfitting to a considerable extent.

A regression model that uses L2 regularization is called ridge regression. It minimizes $\sum_i (y_i - \hat{y}_i)^2 + \lambda \sum_j w_j^2$, where $\hat{y}_i$ is the prediction for example $i$ and $w_j$ are the model weights. The penalty grows as model complexity increases. The regularization parameter $\lambda$ penalizes all the parameters except the intercept, so that the model generalizes and does not overfit the data.
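The difference between the two penalties can be sketched in a few lines of NumPy. This is a minimal illustration, not a training loop: the function names, the weight vector `w`, and the values of `lam` (the regularization parameter) and `lr` (a step size) are all assumptions chosen for the example. The L1 update uses soft-thresholding, a standard proximal step that is swapped in here because plain subgradient steps rarely land on exactly zero; the text above does not prescribe a particular optimizer.

```python
import numpy as np

def l1_penalty(w, lam):
    """L1 penalty: lam times the sum of absolute weight values."""
    return lam * np.sum(np.abs(w))

def l2_penalty(w, lam):
    """L2 penalty: lam times the sum of squared weight values."""
    return lam * np.sum(w ** 2)

def l2_shrink(w, lam, lr):
    """One gradient step on the L2 penalty alone.
    The gradient is 2*lam*w, so every weight is scaled by (1 - 2*lr*lam)."""
    return w - lr * 2.0 * lam * w

def l1_shrink(w, lam, lr):
    """One soft-thresholding (proximal) step on the L1 penalty alone.
    Each weight moves toward zero by lr*lam and is clipped at exactly zero."""
    return np.sign(w) * np.maximum(np.abs(w) - lr * lam, 0.0)

# Hypothetical weights and hyperparameters, chosen only for illustration.
w = np.array([0.05, -0.5, 2.0])
lam, lr = 1.0, 0.1

w_l1 = l1_shrink(w, lam, lr)  # the smallest weight becomes exactly zero
w_l2 = l2_shrink(w, lam, lr)  # all weights shrink, none becomes zero

print("L1 penalty:", l1_penalty(w, lam))
print("L2 penalty:", l2_penalty(w, lam))
print("after L1 step:", w_l1)
print("after L2 step:", w_l2)
```

With these values the L1 step zeroes out the weight 0.05 and subtracts a constant 0.1 from the magnitude of the others, while the L2 step multiplies every weight by 0.8. That contrast is the mechanism behind the sparsity of L1 solutions and the uniform shrinkage of L2 solutions.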

|

Deep Neural Network Regularization for Feature - IEEE Xplore

3 May 2019 · Deep neural network, feature selection, information retrieval, learning-to-rank, ... techniques such as L1, L2 regularization are mainly used to |

|

Regularization - Sebastian Raschka

STAT 479: Deep Learning, Spring 2019 Sebastian •L1/L2 regularization (norm penalties) • Dropout L2 Regularization for Logistic Regression in PyTorch |

|

Regularization for Deep Learning

, or 0. In comparison to L2 regularization, L1 regularization results in a solution that is more sparse. Sparsity in this context refers to the fact that |

|

Regularization Methods in Neural Networks - DiVA

2 History of artificial intelligence, machine learning and neural networks common regularization methods are L1, L2, Early stopping and Dropout5 Previous |

|

Regularization in Deep Neural Networks - OPUS at UTS - University

ity of deep neural networks beyond Dropout, via introducing a combination of L0, L1, and L2 regularization effect into the network training Then we considered |

|

Feature selection, L1 vs L2 regularization, and rotational - ICML

with L2 regularization, SVMs, and neural networks ence on Machine Learning, Banff, Canada, 2004 of learning using L1 regularization is found in (Zheng |

|

Lecture 2: Overfitting Regularization

Cross-validation • L2 and L1 regularization for linear estimators Recall: Overfitting • A general, HUGELY IMPORTANT problem for all machine learning |
